Fake mechanic reviews are common enough that a smart driver should assume some “too perfect” feedback is manufactured—then verify what’s real before trusting it. The fastest way to spot them is to check for specific repair evidence, natural detail, and consistent reviewer behavior across time and platforms.

Many people rely on shop reviews when picking an auto repair shop because ratings feel like shortcuts, yet those shortcuts can be exploited by competitors, agencies, or even well-meaning owners who push for feedback too hard.

Beyond avoiding deception, you can also use patterns in reviews to reduce uncertainty: identify whether complaints point to one-off mistakes or a systemic issue, and whether praise reflects measurable value rather than empty hype.

Below is a practical, step-by-step system that treats reviews like evidence, so you can choose a shop with confidence, not luck.

What are fake shop reviews in auto repair, and why do they happen?

Fake mechanic reviews are reviews that misrepresent the reviewer’s real experience—often written by someone who never used the shop, or written to distort reputation through exaggerated praise or strategic criticism. To begin, it helps to understand the incentives behind them.

Next, think of fake reviews as “reputation manipulation,” not just lying: the goal is to shape your decision by controlling what feels visible, recent, and credible. That often shows up in either extreme positivity (“best ever, flawless”) or targeted negativity (“scam, never go”), with little verifiable repair detail.

Who creates them (and what motives matter to you)?

In practice, fake reviews can be created by a shop owner, an employee, a marketing agency, a competitor, or a paid “review farm.” What matters for drivers is the pattern: coordinated posting bursts, repeated language, and reviewer profiles that look like shells.

Specifically, the most damaging scenario for consumers is when fake reviews hide risk—masking poor diagnostics, surprise charges, or inconsistent workmanship—because you’re not just overpaying; you’re risking safety and downtime.

Why auto repair is uniquely vulnerable to review manipulation

Auto repair is an “information-asymmetry” service: most customers can’t easily verify whether a repair was necessary, whether parts were replaced, or whether labor time was fair. That makes reputation powerful—and therefore tempting to game.

According to a U.S. Federal Trade Commission announcement in August 2024, the FTC finalized a rule targeting fake or false reviews—including those attributed to non-existent people or generated by AI—because deceptive reviews “pollute the marketplace” and harm honest competitors.

Can you trust star ratings alone when choosing a mechanic?

No—star ratings alone are not reliable for choosing a mechanic because (1) ratings can be artificially inflated or suppressed, (2) they hide the distribution of experiences, and (3) they often lack repair-specific proof. Next, you’ll want to treat stars as a starting filter, not a decision.
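To make point (2) concrete, here is a minimal Python sketch showing how two shops can share the same average while having very different spreads of experiences. The ratings are invented for illustration, and `summarize` is a hypothetical helper, not part of any review platform's tooling.

```python
from collections import Counter
from statistics import mean

def summarize(stars):
    """Return the average rating plus a count of reviews at each star level."""
    counts = Counter(stars)
    return {
        "average": round(mean(stars), 2),
        "distribution": {level: counts.get(level, 0) for level in range(5, 0, -1)},
    }

# Invented data: both lists average 4.2 stars.
polarized = [5] * 8 + [1] * 2   # mostly glowing, plus two 1-star reviews
steady = [4] * 8 + [5] * 2      # consistently good, no 1-star reviews

print(summarize(polarized))
print(summarize(steady))
```

Both shops show 4.2 stars, but only the distribution reveals that one of them has a cluster of 1-star experiences worth reading.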

Three quick checks that beat “average stars”

Check 1: Look for a believable “middle” (3–4 star) set of reviews describing real tradeoffs: wait time, parts availability, communication style, warranty handling. Real businesses have imperfections.

Check 2: Read the lowest reviews for repeating themes (e.g., “no estimate,” “price changed,” “couldn’t explain diagnosis”). One dramatic rant is less meaningful than the same complaint across months.

Check 3: Compare the review timeline to business events: sudden spikes after a rebrand, ownership change, or big promo can be natural—but can also signal coordinated posting if the wording looks cloned.
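Check 2 can be sketched as a small tally: count how many distinct months each complaint theme appears in. This is only an illustration. The theme names, keyword phrases, and sample reviews below are assumptions made up for the example, and simple phrase matching will miss complaints worded differently.

```python
from collections import defaultdict

# Hypothetical keyword lists; adjust them to the complaints you actually see.
THEMES = {
    "no_estimate": ("no estimate", "never gave an estimate"),
    "price_changed": ("price changed", "charged more", "surprise fee"),
    "unclear_diagnosis": ("couldn't explain", "could not explain", "misdiagnos"),
}

def recurring_themes(reviews, min_months=2):
    """reviews: list of ("YYYY-MM", text). Keep themes seen in >= min_months distinct months."""
    months_by_theme = defaultdict(set)
    for month, text in reviews:
        lowered = text.lower()
        for theme, phrases in THEMES.items():
            if any(phrase in lowered for phrase in phrases):
                months_by_theme[theme].add(month)
    return {
        theme: sorted(months)
        for theme, months in months_by_theme.items()
        if len(months) >= min_months
    }

# Invented low-star reviews for illustration.
low_reviews = [
    ("2024-01", "The price changed after I dropped the car off."),
    ("2024-03", "They charged more than the quote with no call."),
    ("2024-03", "Total scam, never go here!!!"),
    ("2024-06", "Surprise fee on the invoice, and they couldn't explain the diagnosis."),
]

print(recurring_themes(low_reviews))
```

In this sample, the pricing complaint recurs in three separate months and is kept, while the single rant with no details and the one-off diagnosis complaint drop out.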

According to the FTC’s Consumer Reviews and Testimonials Rule Q&A, which notes the rule went into effect on October 21, 2024, fake or deceptive reviews are treated as harmful marketplace pollution and can trigger civil penalties for knowing violations.

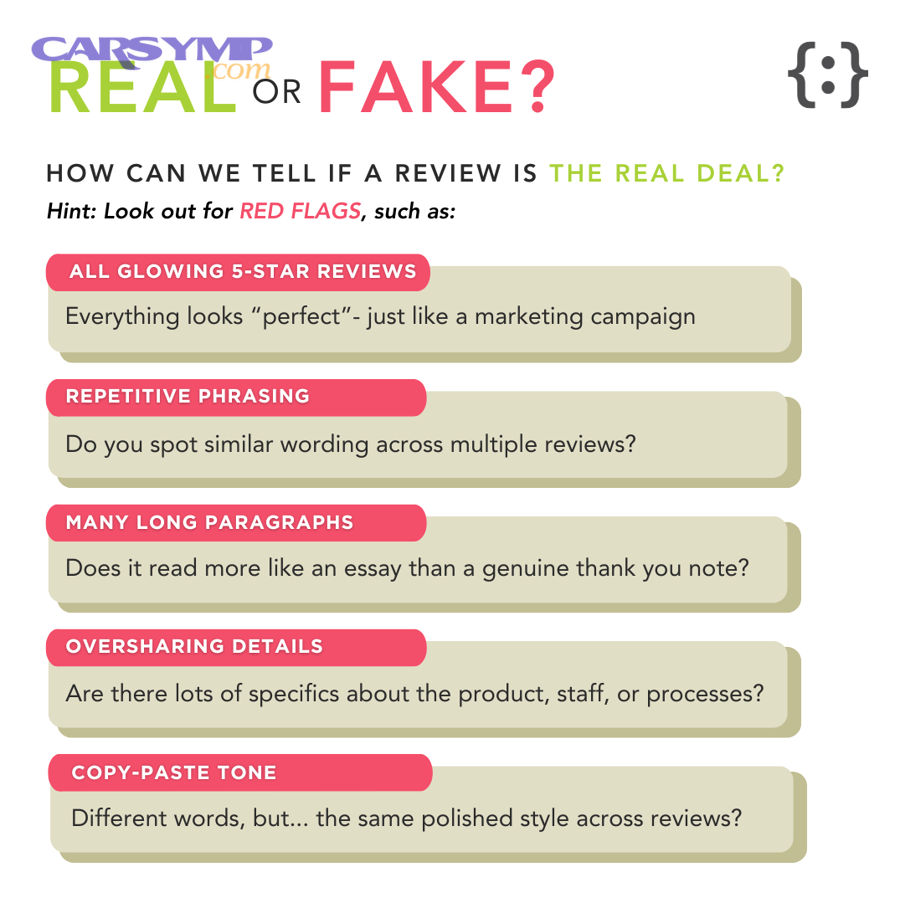

Which language patterns and detail gaps signal a fabricated review?

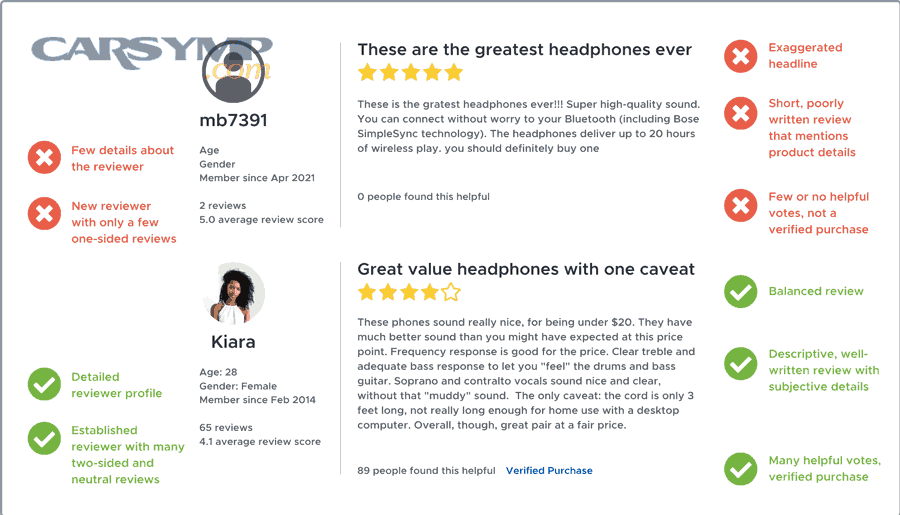

Fake reviews often show up as high confidence with low specificity: sweeping claims, generic praise, and missing repair context (symptoms, diagnostics, parts, timelines). Next, focus on whether the writing reads like an ad rather than a real service story.

Common “ad-like” signals in mechanic reviews

- Over-polished superlatives with no constraints: “perfect,” “best in the world,” “everything was amazing,” with zero mention of cost range, time, or what was fixed.

- Scripted structure: identical sentence rhythm across multiple reviewers, or repeated phrases like “highly recommended” used in the same spot.

- Brand-stuffing: repeating the full shop name unnaturally, or listing services like a menu rather than describing what happened.

- Emotion without mechanics: lots of praise/blame, very few tangible details: no symptoms, no parts, no outcome test, no warranty note.
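The “scripted structure” signal above can be roughly approximated by comparing review texts pairwise. The sketch below uses Python's standard `difflib.SequenceMatcher`. The 0.8 threshold and the sample reviews are assumptions chosen for illustration, not a validated detection method, and short genuine reviews can also look alike.

```python
from difflib import SequenceMatcher
from itertools import combinations

def similar_pairs(reviews, threshold=0.8):
    """Return (i, j, ratio) for review pairs whose wording is suspiciously close."""
    # Lowercase and collapse whitespace so formatting differences do not matter.
    normalized = [" ".join(text.lower().split()) for text in reviews]
    pairs = []
    for (i, a), (j, b) in combinations(enumerate(normalized), 2):
        ratio = SequenceMatcher(None, a, b).ratio()
        if ratio >= threshold:
            pairs.append((i, j, round(ratio, 2)))
    return pairs

# Invented reviews for illustration.
sample_reviews = [
    "Best shop ever! Fast, honest and friendly service. Highly recommended!",
    "Best shop ever! Quick, honest and friendly service. Highly recommended!",
    "Battery light came on; they did a voltage test and replaced the alternator same day.",
]

for i, j, ratio in similar_pairs(sample_reviews):
    print(f"Reviews {i} and {j} are {ratio:.0%} similar")
```

Here only the first two reviews are flagged as a pair. The third, which describes an actual repair, does not resemble either of them.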

What “real” detail looks like (without oversharing)

Real reviews usually include at least two of these: (1) the symptom (“battery light,” “grinding noise”), (2) the diagnostic step (“scan,” “voltage test”), (3) the result (“no more squeal,” “charging at 14.2V”), (4) an estimate or authorization moment, (5) a time anchor (“same day,” “two visits”).
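The “at least two of these” rule can be sketched as a keyword check. The patterns below are hypothetical and far from exhaustive; they only show the idea of counting detail categories rather than judging tone, and a real review can be specific in words these patterns do not cover.

```python
import re

# Hypothetical patterns for the five detail categories described above.
DETAIL_PATTERNS = {
    "symptom": r"battery light|grinding|squeal|leak|vibration|check engine",
    "diagnostic": r"scan|voltage test|load test|smoke test|diagnos",
    "result": r"no more|fixed|charging at|runs fine|problem returned",
    "estimate": r"estimate|quote|authoriz|called (me )?(with|before)|asked before",
    "time_anchor": r"same day|next day|two visits|\b\d+ (hours?|days?|weeks?)\b",
}

def detail_categories(review):
    """Return the sorted names of detail categories the review text touches."""
    text = review.lower()
    return sorted(
        name for name, pattern in DETAIL_PATTERNS.items() if re.search(pattern, text)
    )

def looks_specific(review, minimum=2):
    """True when the review covers at least `minimum` detail categories."""
    return len(detail_categories(review)) >= minimum

detailed = ("Battery light came on. They ran a voltage test, called with an "
            "estimate, and it was done same day.")
generic = "Best mechanic in the world, everything was amazing!"

print(detail_categories(detailed), looks_specific(detailed))
print(detail_categories(generic), looks_specific(generic))
```

The detailed review hits four categories (symptom, diagnostic, estimate, time anchor), while the generic one hits none.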

According to Cornell researchers discussing deceptive opinion spam in July 2011, humans are “lousy” at identifying deceptive reviews, while computer methods in their test identified deceptive reviews with near 90% accuracy—highlighting that language cues can be subtle and require systematic checking.

How do reviewer profiles and posting timelines reveal coordination?

The most reliable way to detect coordinated review behavior is to check who is posting and when—because fake campaigns often leave timeline fingerprints even when the text sounds convincing. Next, combine profile checks with posting bursts.

Profile signals that deserve extra skepticism

- One-and-done accounts: a reviewer who has only one review ever, especially if it’s extreme (1-star or 5-star).

- Geographic mismatch: dozens of reviews across distant cities in short time spans, without a plausible travel pattern.

- Same-day clusters: many reviews posted on the same day or week, all with similar length and tone.

- Content mismatch: the reviewer claims complex work (“transmission rebuild”) but cannot mention any basic facts (time, cost range, symptoms).

Timeline patterns that often indicate a push

Look for “review storms” after promotions, disputes, or competitive pressure. A legitimate surge might include diverse experiences and mixed ratings; a manipulated surge often looks uniform: same rating, same claims, same wording density.
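A “review storm” check can be sketched as a sliding window over review dates: flag any window that is both unusually busy and unusually uniform in rating. The window length, minimum count, and uniformity share below are assumed values for illustration, and the sample data is invented. A flagged window is a prompt to read those reviews closely, not proof of manipulation.

```python
from collections import Counter
from datetime import date, timedelta

def find_review_storms(reviews, window_days=7, min_reviews=5, uniform_share=0.8):
    """reviews: list of (date, stars). Return (start, count, dominant_stars) per flagged window."""
    ordered = sorted(reviews)
    storms = []
    last_flagged_end = None
    for i, (start, _) in enumerate(ordered):
        # Skip reviews already covered by a window we flagged.
        if last_flagged_end is not None and start <= last_flagged_end:
            continue
        end = start + timedelta(days=window_days - 1)
        window = [stars for day, stars in ordered[i:] if day <= end]
        dominant, hits = Counter(window).most_common(1)[0]
        if len(window) >= min_reviews and hits / len(window) >= uniform_share:
            storms.append((start, len(window), dominant))
            last_flagged_end = end
    return storms

# Invented timeline: scattered mixed reviews, then six 5-star reviews in four days.
sample = [
    (date(2024, 1, 5), 4), (date(2024, 2, 17), 2), (date(2024, 3, 2), 5),
    (date(2024, 4, 10), 5), (date(2024, 4, 10), 5), (date(2024, 4, 11), 5),
    (date(2024, 4, 12), 5), (date(2024, 4, 12), 5), (date(2024, 4, 13), 5),
    (date(2024, 5, 20), 3),
]

for start, count, stars in find_review_storms(sample):
    print(f"{count} reviews within a week of {start}, mostly {stars}-star")
```

On this sample, only the April cluster is flagged. Combine a hit like this with the wording and profile checks before drawing conclusions, since a legitimate promotion can also produce a burst.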

According to Yelp’s 2023 Trust & Safety report press release dated February 28, 2024, the company closed more than 278,600 user accounts for policy violations and prevented more than 40,700 potential new business pages associated with spammy behaviors, which shows how widespread coordinated misuse can be on large platforms.

How can photos, invoices, and repair specifics validate a review?

You can validate a mechanic review by checking whether it contains verifiable repair anchors—photos, invoices, part names, and outcome details that would be hard to fake at scale. Next, treat proof as supportive evidence, not absolute truth (because photos can be reused).

What to look for in “evidence-rich” reviews

- Before/after details: symptom described first, repair explained second, outcome described last.

- Parts or tests mentioned naturally: “serpentine belt,” “tensioner,” “battery load test,” “smoke test,” “alignment printout.”

- Estimate authorization moment: “They called with options,” “They asked before replacing,” “They explained labor time.”

- Warranty context: “came back once,” “they rechecked at no charge,” “covered under warranty.”

Red flags even when photos exist

Be careful if many reviewers post similar-looking photos, or if photos are generic (stock images), or if the “invoice” is cropped so heavily it proves nothing. Also beware “evidence” that doesn’t match the shop’s location, branding, or services offered.

According to the FTC’s August 2024 press release, the final rule targets reviews that misrepresent who wrote them: reviews attributed to someone who doesn’t exist (including AI-generated reviews) or to someone who had no actual experience with the business. For readers, that means “proof” should align with a real experience, not just a persuasive presentation.

What cross-platform checks confirm reputation beyond one site?

The best way to reduce review manipulation risk is to triangulate: compare feedback patterns across multiple platforms and signals—because coordinated fakes rarely look consistent everywhere. Next, you’ll convert “opinions” into a reputation map.

Triangulation checklist you can do in 10 minutes

- Compare timelines: do the same strengths/weaknesses appear across platforms over months?

- Compare complaint themes: if “bait-and-switch pricing” appears only on one site, investigate why.

- Check shop responses: professional replies that address specifics can be a positive signal; copy-paste responses can be neutral or negative.

- Check business fundamentals: hours, address consistency, ownership changes, and whether the shop lists diagnostic capabilities relevant to your problem.

In your research, keep one note in mind: you’re not hunting for perfect auto repair reviews; you’re testing whether the shop’s story is consistent across independent signals.

Also, don’t rely on a single “best of” list. In practice, debating which review site is best is less useful than checking whether the same strengths and weaknesses show up in more than one place.

According to Yelp’s February 28, 2024 press release, Yelp also reported preventing more than 23,600 inappropriate reviews from publishing using a new system that leverages LLMs to flag potentially inappropriate reviews for moderator evaluation. It is an example of why cross-checking matters even when platforms fight spam.

How do you use review patterns to avoid overpaying without guessing?

You can use reviews to estimate pricing fairness by combining repair-specific detail, repeatable time/cost ranges, and transparent estimate behavior—then comparing that to what you’re quoted. Next, you’ll turn qualitative comments into a “pricing expectation band.”

How to translate reviews into a fair-pricing expectation

- Collect 5–10 “evidence-rich” reviews describing similar jobs (brakes, alternator, diagnostics, AC repair).

- Extract the repeatables: how often people mention clear estimates, calls before extra work, and itemized invoices.

- Note the pricing language: “explained options,” “showed old parts,” “stuck to estimate,” “no surprise fees.”

- Build a band: if many legitimate reviews describe itemization and options, expect the same—regardless of the exact number.

Using reviews to estimate fair pricing works best when you focus on behavior (estimate discipline) rather than chasing a single dollar figure, because vehicle-to-vehicle variation is real.

According to the FTC’s rule announcement, one prohibited practice includes compensation conditioned on a review expressing a particular sentiment—so pricing “certainty” that looks too uniformly positive may reflect incentive pressure rather than true market fairness.

How should you weigh negative reviews without being manipulated?

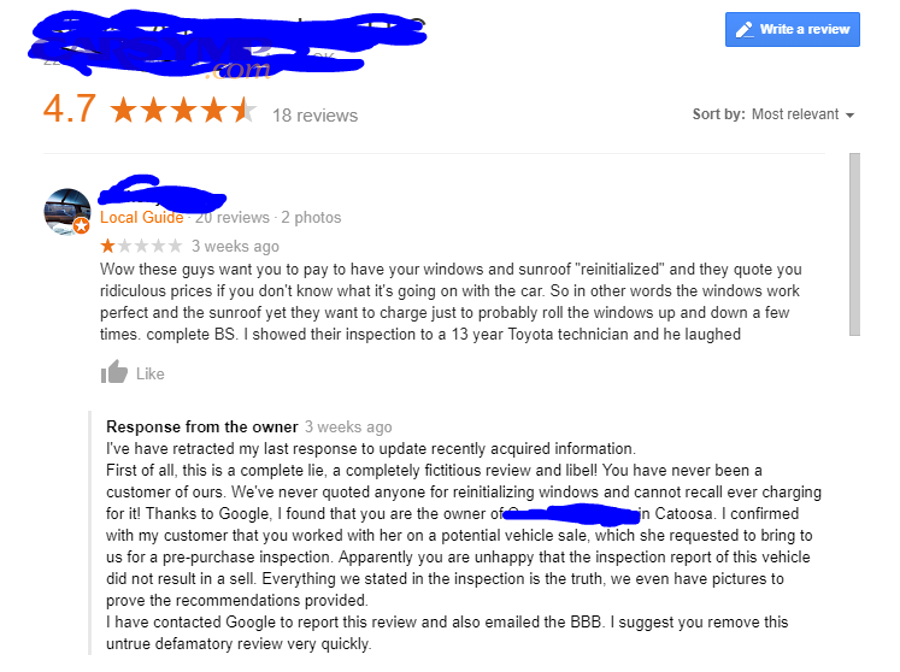

You should weigh negative reviews by separating legitimate service failures from strategic or exaggerated attacks, using three criteria: repeatability of the complaint, specificity of evidence, and how the shop handled resolution. Next, you’ll look for patterns instead of reacting to emotion.

A simple “signal vs noise” method

Signal usually includes: timeline (“dropped off Monday”), documentation (“estimate changed”), and outcome (“problem returned”). Noise often includes: insults, vague accusations, no repair context, and no attempt to resolve or clarify.

However, some real negative experiences are emotional—and still valid—so don’t dismiss tone alone. Instead, anchor your judgment to whether the review provides checkable facts and whether other reviewers describe similar breakdowns.

What a healthy shop response looks like

A credible response is specific without exposing private details: it invites offline resolution, references the estimate process, and explains warranty or diagnostic limitations. A suspicious response is aggressive, dismissive, or copy-pasted across many complaints.

According to the FTC’s press release, “review suppression” through unfounded legal threats or intimidation is prohibited under the final rule—so patterns of customers claiming they were pressured to remove reviews can be a serious warning sign.

What should you do before booking to confirm the reviews are real?

The most effective pre-booking move is to ask three confirmation questions that only a transparent shop answers clearly: estimate policy, diagnostic process, and warranty/return handling. Next, match their answers to what consistent reviewers described.

Three questions that expose “review-polished” shops

- “How do you handle estimates and approvals?” A trustworthy shop explains authorization steps and whether estimates are written and itemized.

- “What diagnostic steps do you typically use for this symptom?” You’re not testing technical brilliance; you’re testing willingness to explain process.

- “If the issue comes back, what’s the warranty or recheck policy?” Legit shops describe a process, not a vibe.

What to listen for in their answers

Listen for clarity, boundaries, and options. Honest shops say things like “it depends, but here’s how we decide,” and explain how parts availability and inspection results affect timing and cost. Overconfident guarantees with no conditions can be marketing, not mechanics.

According to the FTC’s Q&A on the Consumer Reviews and Testimonials Rule, the rule authorizes civil penalties for knowing violations and targets deceptive conduct involving reviews and testimonials—so a shop’s internal culture around transparency is increasingly a compliance issue, not just customer service.

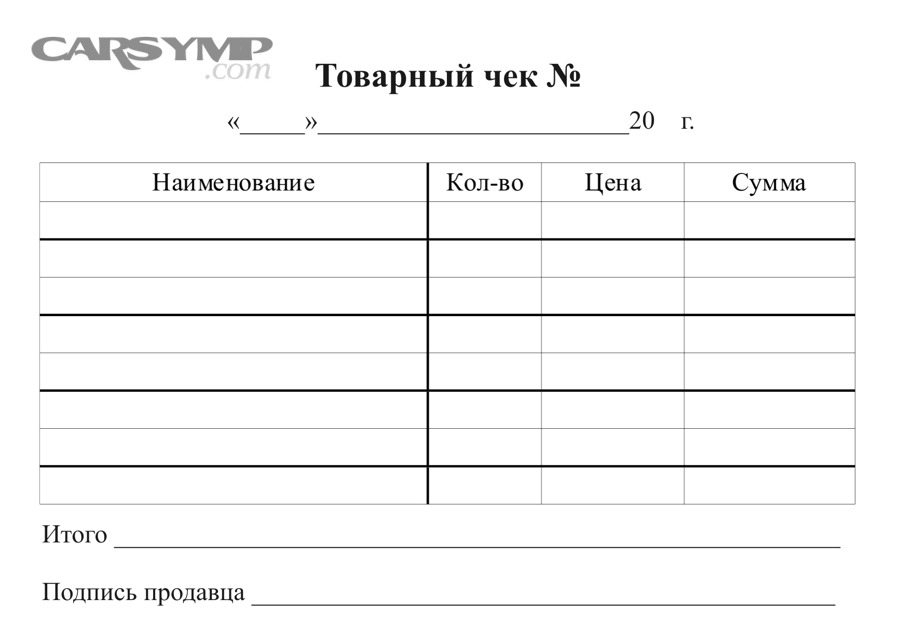

Checklist table: how to spot fake mechanic reviews fast

This table contains a practical “red flag vs green flag” checklist so you can scan reviews quickly without overthinking. It helps you convert scattered signals into a consistent decision rule.

| What you see | Likely meaning | What to do |
|---|---|---|
| Many 5-star reviews in a short burst with similar wording | Possible coordinated campaign | Check reviewer profiles and cross-platform timelines |
| Reviews praise “honesty” but never mention estimate or authorization | Generic persuasion without proof | Look for itemized invoice language and repair specifics |
| Multiple mid-range reviews mention clear calls before extra work | Consistent estimate discipline | Ask the shop to confirm their estimate policy before booking |
| 1-star attacks with no dates, no service, no repair details | Low-evidence negativity (could be fake) | Search for repeating themes across months and sites |
| Negative reviews cite the same issue (price changes, misdiagnosis) repeatedly | Potential systemic process problem | Ask targeted questions; consider alternatives if pattern persists |

When “review detective work” ends and risk management begins

At this point, you’ve learned to spot patterns in review text and reviewer behavior. The next step is understanding how platforms and regulators respond, so you know what to report, what to ignore, and what to treat as a high-risk signal.

How do platforms and laws reduce fake review harm—and what should you do?

Platforms and laws reduce fake review harm by combining detection systems, moderation, and penalties for knowing misconduct—yet no system is perfect, so consumers still need a verification habit. Next, use the safeguards as support, not as a substitute for judgment.

What the FTC rule changes for fake reviews (including AI)

The FTC’s final rule targets fake or false reviews that misrepresent real experience, including AI-generated reviews, and allows civil penalties for knowing violations. That matters because it discourages businesses from “buying credibility” as a marketing tactic.

What Yelp’s Trust & Safety actions suggest about scale

Large platforms publicly report enforcement actions—like account closures, blocked business pages, and prevented reviews—because review systems are a constant arms race. Use those reports as proof that “some reviews are filtered,” not proof that any one shop is guilty.

How to report suspicious review behavior responsibly

Report patterns, not opinions: link clusters of similar reviews, suspicious profiles, and timing anomalies. Avoid accusing individuals; focus on observable behavior that moderators can evaluate without guesswork.

How to build a long-term “trust stack” beyond reviews

Combine reviews with fundamentals: clear written estimates, itemized invoices, transparent diagnostics, warranty handling, and consistent communication. When those align with stable reputation patterns, you can book confidently even if the internet is messy.

Final note: If you’re comparing shops and feel overwhelmed, write down your “top 3 evidence points” from each place: a repair-specific review, a neutral/mid-range review, and a negative review with details. Then choose the shop whose story stays consistent across all three.