Performing a leak check and pressure test after installation involves systematically pressurizing the system to specified levels, holding that pressure for a designated duration, and using detection methods like bubble solutions or electronic sensors to identify any leaks before commissioning. This critical quality assurance step ensures system integrity, prevents hazardous failures, and confirms compliance with manufacturer specifications and building codes across HVAC, plumbing, and industrial piping applications. The process typically requires specialized equipment including pressure regulators, calibrated gauges, test media (nitrogen or water), and leak detection tools, with specific procedures varying based on system type and regulatory requirements.

Understanding the proper equipment selection is essential before beginning any pressure test. The tools you need range from basic soap solutions and pressure gauges to advanced digital manifolds with temperature compensation for HVAC systems, hydrostatic test pumps for plumbing installations, and differential pressure transducers for industrial applications. Each system type demands specific equipment configurations, and selecting inappropriate tools can compromise test accuracy or create safety hazards during pressurization.

Establishing the correct test pressure levels and duration prevents both false failures and undetected leaks. HVAC systems typically require 200-600 psi nitrogen pressure held for 30 minutes to 48 hours depending on application scale, while plumbing systems generally test at 150 psi for water lines with hold times ranging from 2 to 24 hours. Industrial piping follows ASME code requirements that mandate test pressures of 1.5 times the design pressure for hydrostatic tests or 1.1 times for pneumatic tests, with minimum hold periods determined by system volume and stored energy calculations.
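As a rough illustration of the industrial multipliers quoted above, the sketch below turns a design pressure into a test pressure. The function name and the two-entry lookup table are hypothetical, and the calculation deliberately ignores any temperature or stress corrections that the governing code may also require.

```python
# Multipliers taken from the text above: 1.5x design pressure for
# hydrostatic tests, 1.1x for pneumatic tests. Illustrative only.
TEST_FACTORS = {"hydrostatic": 1.5, "pneumatic": 1.1}


def required_test_pressure(design_pressure_psi: float, method: str) -> float:
    """Return the test pressure (psi) for a given design pressure and method."""
    if method not in TEST_FACTORS:
        raise ValueError(f"unknown test method: {method!r}")
    if design_pressure_psi <= 0:
        raise ValueError("design pressure must be positive")
    return TEST_FACTORS[method] * design_pressure_psi


if __name__ == "__main__":
    for method in TEST_FACTORS:
        print(method, required_test_pressure(200.0, method))
```

Always confirm the result against the project specification and the applicable code edition before pressurizing.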

When systems fail pressure tests, systematic troubleshooting becomes necessary to locate and repair leaks efficiently. Next, let’s explore the foundational concepts that make leak checking and pressure testing essential practices across all installation projects.

What Is a Leak Check and Pressure Test After Installation?

A leak check and pressure test after installation are two complementary procedures that verify system integrity by pressurizing newly installed pipes, vessels, or equipment to detect any unwanted fluid or gas escape before the system enters service. The pressure test quantitatively measures whether a system can maintain specified pressure over time, while the leak check qualitatively locates specific points where leaks occur using visual, auditory, or electronic detection methods. These procedures apply to HVAC refrigerant lines, potable water plumbing, natural gas piping, industrial process systems, and any pressurized installation where containment integrity directly affects safety, efficiency, and regulatory compliance.

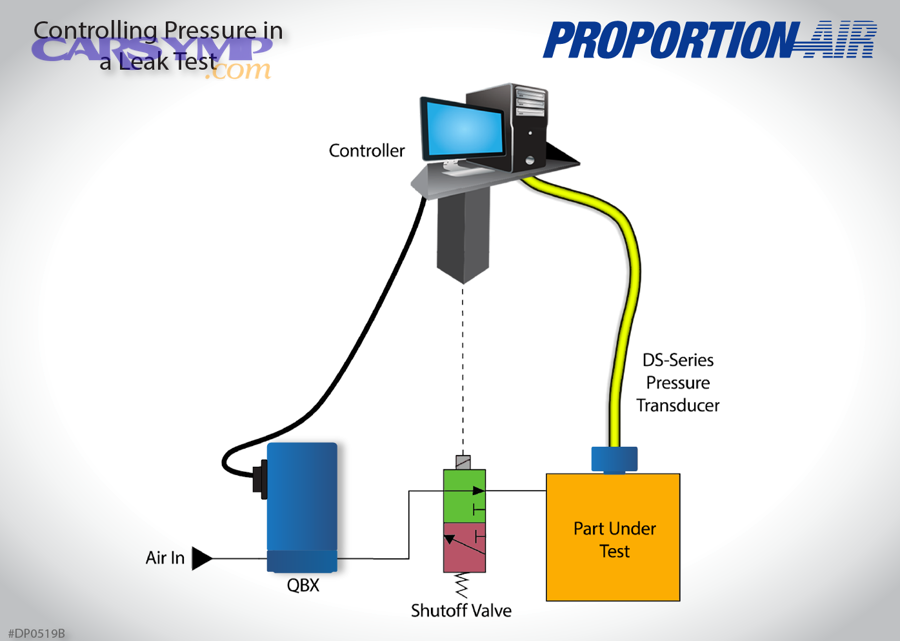

Specifically, the pressure test serves as the initial verification step that establishes whether the system as a whole meets tightness requirements. Technicians pressurize the system using appropriate test media—typically nitrogen for HVAC and gas systems, water for plumbing, or compressed air for certain applications—then monitor pressure readings over a specified duration. A system passes when pressure loss remains within acceptable limits defined by code requirements or manufacturer specifications, indicating no significant leaks exist. The test quantifies system integrity through measurable pressure decay rates that correlate to allowable leakage volumes.

The leak check follows or accompanies pressure testing to pinpoint exact leak locations when pressure loss occurs. Technicians apply soap solutions that form bubbles at leak points, use ultrasonic detectors that hear high-frequency sounds from escaping gas, or employ electronic sensors that detect specific gases like refrigerant or tracer compounds. This qualitative assessment enables targeted repairs rather than wholesale system replacement, making it an indispensable troubleshooting tool.

These tests are mandatory after new installations to verify workmanship quality, following repairs to confirm leak remediation, and after modifications that could compromise system integrity. Moreover, the procedures protect against multiple failure modes simultaneously. In HVAC systems, undetected refrigerant leaks cause efficiency losses, environmental harm through emissions of ozone-depleting or high-global-warming-potential refrigerants, and eventual compressor failure. Plumbing leaks create water damage, mold growth, and structural deterioration. Gas piping leaks present fire and explosion hazards, asphyxiation risks in confined spaces, and regulatory violations with severe penalties.

Industries requiring these tests include residential and commercial HVAC, municipal water systems, natural gas distribution, pharmaceutical manufacturing, food processing, chemical plants, and semiconductor fabrication. Each application maintains specific testing protocols adapted to the fluid type, pressure class, and consequence of failure, but all share the fundamental principle of verifying containment before operational use.

Why Should You Perform Leak Checks and Pressure Tests After Installation?

You should perform leak checks and pressure tests after installation because they prevent catastrophic safety failures, ensure regulatory compliance, and protect against expensive property damage by detecting system weaknesses before pressurized fluids enter service. These procedures identify installation defects like improperly tightened connections, damaged gaskets, defective welds, or component flaws that would otherwise remain hidden until operational pressures cause sudden failures. Testing establishes a documented baseline of system integrity that satisfies code requirements, protects warranties, and provides legal evidence of due diligence in construction quality.

To begin, safety considerations make post-installation testing non-negotiable for systems containing hazardous substances. Natural gas leaks create explosion risks that have caused numerous building collapses and fatalities when undetected during initial commissioning. Refrigerant leaks in HVAC systems release compounds that can cause asphyxiation in enclosed spaces or environmental damage through ozone depletion. Industrial process piping containing corrosive chemicals, high-temperature steam, or toxic substances poses immediate danger to workers and surrounding communities if containment fails. Pressure testing simulates worst-case operating conditions under controlled circumstances when personnel can evacuate the area, whereas discovering leaks during operation may provide no warning before catastrophic release.

Code compliance represents another compelling reason for systematic testing. The International Mechanical Code (IMC), International Plumbing Code (IPC), ASME B31.3 for process piping, and AWWA standards for water systems all mandate pressure testing at specified levels and durations. Building inspectors refuse to approve installations lacking documented test results, halting project completion and delaying occupancy. Insurance companies may deny claims for damage resulting from untested systems, citing contractor negligence. Professional licensing boards can sanction technicians who skip required testing procedures, jeopardizing their ability to work.

Economic protection through early defect detection provides immediate return on testing investment. Water damage from a failed plumbing joint can cost tens of thousands in repairs to flooring, drywall, and structural elements, plus business interruption losses and mold remediation expenses. HVAC refrigerant replacement costs $50-$150 per pound depending on the refrigerant type, and slow leaks can deplete an entire charge over months while the system runs inefficiently. Industrial facilities may face production shutdowns costing millions per day when process piping fails unexpectedly. Detecting these issues during testing costs only the technician’s time and minor repair materials—a fraction of operational failure expenses.

System efficiency and longevity improve when installations begin service leak-free. HVAC systems with refrigerant leaks run continuously trying to maintain temperature, increasing energy consumption by 20-50% while providing insufficient cooling or heating. Compressors working with low refrigerant levels overheat and fail prematurely, requiring $1,500-$8,000 replacements. Water systems with leaks waste resources, increase utility bills, and create moisture conditions that accelerate pipe corrosion. Pressure testing ensures systems operate at designed efficiency from day one.

Warranty protection depends on documented testing in most equipment contracts. Manufacturers void warranties when installers fail to perform specified pressure tests before charging refrigerant or introducing process fluids. This protection clause shifts liability for installation defects back to contractors who skipped verification steps, leaving building owners without recourse for expensive equipment failures. Proper testing documentation preserves warranty coverage and establishes clear responsibility boundaries between manufacturers and installers.

According to the National Institute of Standards and Technology (NIST), properly conducted pressure testing reduces post-installation callbacks by 76% compared to installations without systematic verification procedures. This data demonstrates that testing investment pays immediate dividends through reduced warranty work and enhanced customer satisfaction.

What Equipment Do You Need for Leak Check and Pressure Testing?

You need pressure regulation devices, calibrated measurement instruments, appropriate test media, leak detection tools, safety equipment, and documentation systems to perform comprehensive leak checks and pressure tests after installation. The specific equipment configuration varies by system type—HVAC installations require nitrogen cylinders with high-pressure regulators and refrigerant-specific leak detectors, plumbing systems need hydrostatic test pumps and water supplies, while industrial applications demand differential pressure transducers and tracer gas detection equipment. Regardless of application, all testing setups must include pressure relief protection, accurate monitoring instruments, and systematic methods to verify results and maintain test records.

More specifically, the equipment selection process begins with understanding test media requirements and pressure ranges for your specific application. Pressure regulation equipment forms the foundation of any test setup, controlling the rate of pressurization and maintaining steady test pressure throughout the hold period. Safety equipment protects personnel from stored energy hazards inherent in pressurized systems, particularly during pneumatic testing where gas compression creates explosive release potential if containment fails.

What Are the Essential Tools for HVAC System Testing?

HVAC system pressure testing requires nitrogen cylinders with high-pressure regulators rated for 200-800 psi, digital manifold gauge sets with temperature compensation capabilities, vacuum pumps for system evacuation, and refrigerant-specific electronic leak detectors calibrated to detect halogenated compounds.

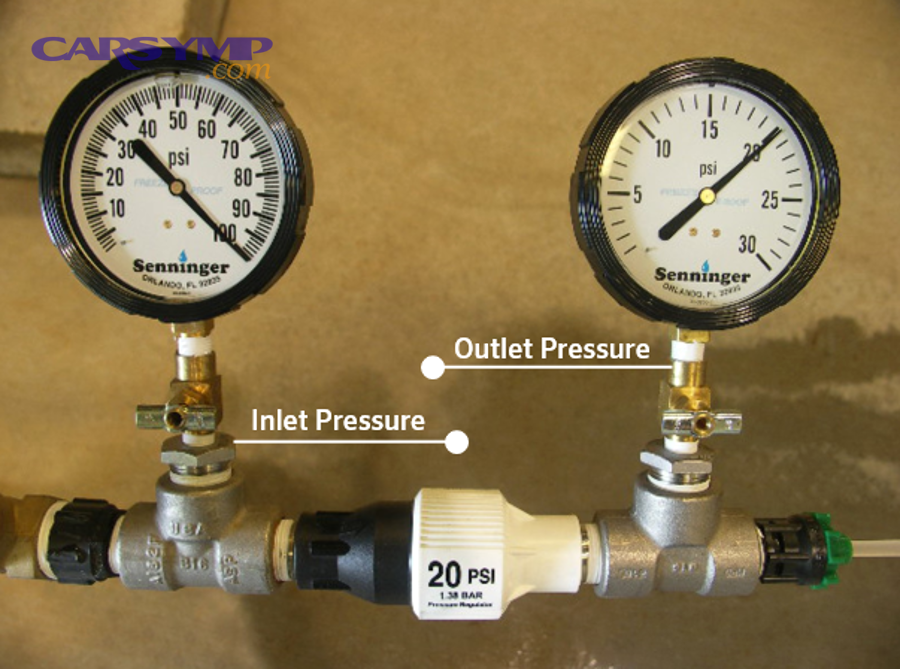

The nitrogen regulator serves as the primary pressure control device, featuring a diaphragm mechanism that reduces cylinder pressure (typically 2,000-2,500 psi when full) to controllable test pressures. High-quality regulators include dual gauges showing both cylinder pressure and outlet pressure, plus a pressure relief valve that vents automatically if the regulator fails. Regulators must connect to nitrogen cylinders via CGA-580 fittings designed specifically for inert gases, preventing accidental connection to oxygen or other incompatible cylinders. Never use regulators interchangeably between gas types, as doing so risks contamination and dangerous chemical reactions.

Digital manifolds represent significant advancement over traditional analog gauge sets, providing precise pressure readings with 0.1 psi resolution and built-in temperature compensation that adjusts for ambient temperature changes during extended tests. Models like the Fieldpiece SMAN series or Testo 550 series connect to smartphone apps that log pressure readings continuously, calculate pressure decay rates automatically, and generate test reports documenting system integrity. These instruments typically include multiple sensor ports allowing simultaneous monitoring of high-side and low-side pressures in refrigeration systems. The temperature compensation feature proves critical for commercial installations where 24-48 hour test durations span day-night temperature cycles that naturally affect gas pressure according to Gay-Lussac’s law.

Vacuum pumps become necessary when testing procedures require evacuating systems before pressure testing or when leak-checking through vacuum decay methods. Two-stage rotary vane pumps rated to achieve 50 microns or less ensure complete moisture and non-condensable gas removal. Vacuum gauges, either thermocouple or capacitance manometer types, measure deep vacuum levels that analog gauges cannot resolve. The vacuum step prevents moisture contamination that causes acid formation in refrigerant systems and removes air that could mask small leaks during pressure testing.

Electronic leak detectors designed for HVAC applications use heated diode or infrared sensor technology to detect halogenated refrigerants at concentrations as low as 0.1 ounces per year. These devices feature adjustable sensitivity allowing technicians to first locate general leak areas at lower sensitivity, then pinpoint exact leak sources at maximum sensitivity. The probe wand should be moved slowly at 1-2 inches per second around all joints, valve stems, and brazed connections, as faster movement rates reduce detection accuracy. Battery-powered models with headphone jacks enable leak detection in noisy environments where audible alarms prove ineffective.

Core removal tools allow extracting Schrader valve cores before testing, increasing flow rates during pressurization and preventing valve seat damage from repeated high-pressure cycling. Protective valve caps prevent debris contamination during the pressurization and testing phases. Charging hoses rated for 600 psi working pressure with 1/4″ or 3/8″ SAE fittings connect test equipment to system service ports, and brass ball valves installed inline provide positive shutoff capability to isolate the system once test pressure is reached.

What Are the Essential Tools for Plumbing and Water Line Testing?

Plumbing and water line pressure testing requires hydrostatic test pumps capable of generating 200-300 psi, calibrated Bourdon tube pressure gauges with 0-300 psi range, water supply connections, system drainage equipment, and air compressors for pneumatic testing when water use is contraindicated.

Hydrostatic test pumps, either manual lever-operated or electric motor-driven models, pressurize water within closed plumbing systems to specified test pressures. Manual pumps like the Reed HPP prove sufficient for residential installations, generating 300 psi through mechanical advantage while providing tactile feedback on pressurization effort. Electric test pumps automate the pressurization process for large commercial systems where manual pumping would exhaust the operator, featuring pressure switches that automatically maintain target pressure throughout the test duration. Both pump types include pressure gauges directly mounted on the pump assembly, though external gauges at remote system locations provide more accurate readings unaffected by pressure losses through hoses and fittings.

Calibrated Bourdon tube pressure gauges with 2.5″ or 4″ dial faces provide accurate pressure measurement throughout the test. Gauge accuracy specifications of ±1% full scale ensure reliability, and gauges require annual calibration to maintain certification for code compliance documentation. The gauge’s full-scale range should be roughly 1.5 to 2 times the test pressure so the reading falls near mid-scale: testing at 150 psi works best with a 0-300 psi gauge rather than a 0-1000 psi gauge, where the reading falls in the lowest quarter of the scale and a ±1% full-scale error amounts to ±10 psi. Liquid-filled gauges contain glycerin or silicone that dampens needle flutter caused by pressure pulsations, making readings easier to observe during pressurization.
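The gauge-range guidance can be expressed as a quick check. This is a minimal sketch: the 25% and 75% band limits are assumptions chosen to match the 0-300 psi versus 0-1000 psi example above, not values taken from a standard.

```python
def gauge_is_suitable(test_psi: float, full_scale_psi: float,
                      low_fraction: float = 0.25,
                      high_fraction: float = 0.75) -> bool:
    """True when the test pressure reads within the assumed useful band
    of the gauge scale (between low_fraction and high_fraction)."""
    if test_psi <= 0 or full_scale_psi <= 0:
        raise ValueError("pressures must be positive")
    fraction = test_psi / full_scale_psi
    return low_fraction <= fraction <= high_fraction


if __name__ == "__main__":
    for full_scale in (200, 300, 1000):
        print(full_scale, gauge_is_suitable(150, full_scale))
```

A 150 psi test lands at mid-scale on a 0-300 psi gauge, but at only 15% of scale on a 0-1000 psi gauge, which the check rejects.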

Water supply requires clean potable water introduced through temporary connections at convenient system locations. Garden hose adapters, cam-lock fittings, or specialized test port fittings enable connection between the test pump and the system. The water supply must be free from debris that could clog small orifices or damage valve seats, necessitating inline filters when using construction site water sources. Temperature considerations become important in cold climates where testing must occur before building heating is operational—water-based testing risks freeze damage if ambient temperatures drop below 32°F before draining completes.

Air compressors rated for 150-200 psi continuous duty provide pneumatic testing capability when hydrostatic testing is impractical due to weight limitations on structural supports, freezing concerns, or water-sensitive materials within the system. However, pneumatic testing requires extreme caution due to stored energy hazards. A system volume of only 10 cubic feet of air at 150 psi holds roughly 300,000 foot-pounds (about 400 kJ) of stored energy by the isentropic expansion estimate, comparable to about 0.2 pounds of TNT, and that energy releases explosively if containment fails. Consequently, building codes restrict pneumatic testing and require specific safety protocols including personnel exclusion zones and gradual pressurization in 25 psi increments with hold periods at each stage.
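Stored-energy figures vary with the expansion model chosen, so it helps to see one calculation written out. The sketch below assumes ideal-gas isentropic expansion to atmospheric pressure with a specific heat ratio of 1.4 (air or nitrogen). The function name and unit constants are illustrative, and the TNT conversion of about 4.27 MJ/kg is an assumed reference value.

```python
PSI_TO_PA = 6894.757       # pascals per psi
FT3_TO_M3 = 0.0283168      # cubic metres per cubic foot
J_TO_FTLBF = 0.737562      # foot-pounds per joule
ATM_PSI = 14.696           # assumed atmospheric pressure, psia
TNT_J_PER_KG = 4.27e6      # assumed TNT energy equivalence


def stored_energy_joules(gauge_psi: float, volume_ft3: float,
                         k: float = 1.4) -> float:
    """Isentropic expansion energy of a compressed ideal gas, in joules."""
    p_abs = (gauge_psi + ATM_PSI) * PSI_TO_PA
    p_atm = ATM_PSI * PSI_TO_PA
    volume_m3 = volume_ft3 * FT3_TO_M3
    expansion_term = 1.0 - (p_atm / p_abs) ** ((k - 1.0) / k)
    return (1.0 / (k - 1.0)) * p_abs * volume_m3 * expansion_term


if __name__ == "__main__":
    energy_j = stored_energy_joules(150.0, 10.0)
    print(f"{energy_j:,.0f} J")
    print(f"{energy_j * J_TO_FTLBF:,.0f} ft-lbf")
    print(f"{energy_j / TNT_J_PER_KG:.3f} kg TNT equivalent")
```

Because energy scales with both pressure and volume, the same function can be used to compare test sections when deciding how to size exclusion zones.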

Drainage equipment, including sump pumps, discharge hoses, and adequate floor drains or exterior disposal points, becomes necessary for removing test water after successful test completion. Large commercial systems may contain thousands of gallons requiring hours to drain completely. The drainage plan must account for local regulations prohibiting discharge of chlorinated water to storm sewers or natural waterways, potentially requiring dechlorination treatment before disposal.

System isolation equipment includes brass gate valves, ball valves, or specialized test plugs that isolate the section under test from adjoining systems. Inflatable test balls inserted into pipe ends provide temporary sealing for open-ended sections, expanding when pressurized to create watertight barriers. All isolation devices must withstand test pressure plus a safety margin without leaking, as isolation point failures compromise the entire test validity.

What Are the Essential Tools for Industrial Piping Systems?

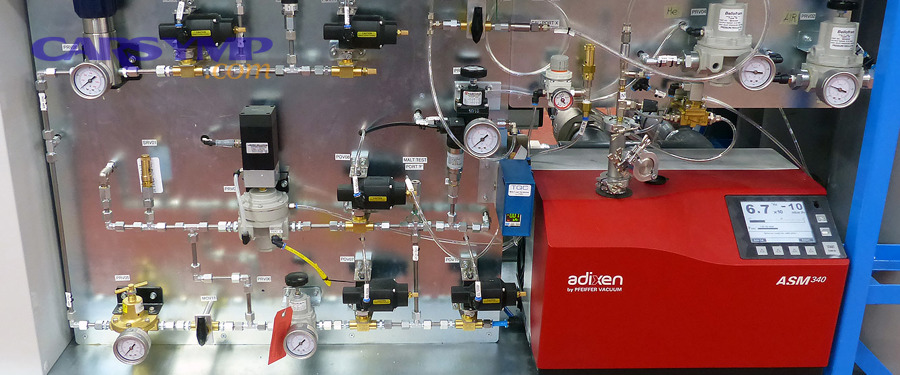

Industrial piping system testing requires high-pressure nitrogen systems with flow control manifolds, differential pressure transducers for sensitive leak detection, tracer gas equipment for critical applications, remote monitoring systems for large installations, and comprehensive safety equipment including pressure relief assemblies and exclusion zone barriers.

High-pressure nitrogen systems for industrial applications utilize manifolded cylinder banks that maintain continuous gas supply throughout extended tests on large-volume systems. Individual cylinders containing 280 cubic feet at 2,640 psi provide insufficient capacity for systems exceeding 1,000 cubic feet, necessitating manifolds connecting 4-20 cylinders to a common supply header with a master pressure regulator. These manifolds often incorporate automatic switchover systems that transition between primary and reserve cylinder banks when pressure drops below setpoints, ensuring uninterrupted testing even during overnight holds. Flow control valves downstream of the regulator enable precise pressurization rates, critical for large piping systems where rapid pressurization creates damaging pressure transients.
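To get a feel for how many cylinders a manifold needs, a first-pass estimate can be sketched as follows. It assumes ideal-gas behaviour, constant temperature, and that each cylinder can only be drawn down to the test pressure. The function name and defaults (280 cubic feet at 2,640 psi, from the text above) are illustrative rather than a supplier's sizing method.

```python
import math

ATM_PSI = 14.7  # assumed atmospheric pressure, psia


def cylinders_needed(system_volume_ft3: float, test_psig: float,
                     cylinder_scf: float = 280.0,
                     cylinder_full_psig: float = 2640.0) -> int:
    """Estimate the cylinder count needed to raise a system from
    atmospheric pressure to test_psig (ideal gas, isothermal)."""
    if test_psig >= cylinder_full_psig:
        raise ValueError("test pressure must be below cylinder pressure")
    # Standard cubic feet of gas that must be added to the system.
    gas_needed_scf = system_volume_ft3 * test_psig / ATM_PSI
    # Fraction of each cylinder usable before it equalizes at test pressure.
    full_abs = cylinder_full_psig + ATM_PSI
    test_abs = test_psig + ATM_PSI
    usable_scf = cylinder_scf * (full_abs - test_abs) / full_abs
    return math.ceil(gas_needed_scf / usable_scf)


if __name__ == "__main__":
    print(cylinders_needed(50.0, 150.0))
    print(cylinders_needed(1000.0, 15.0))
```

Real nitrogen deviates somewhat from ideal-gas behaviour at cylinder pressures, and purge losses add to consumption, so a margin on top of this estimate is prudent.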

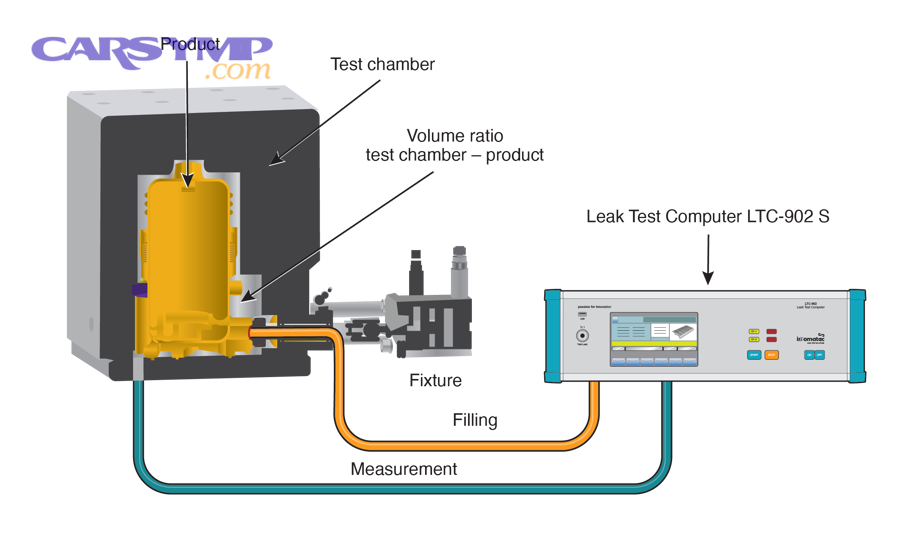

Differential pressure transducers provide measurement sensitivity orders of magnitude beyond standard pressure gauges, detecting leaks as small as 0.001 standard cubic centimeters per second. These instruments compare pressure between a sealed reference volume and the test volume, measuring only the differential pressure change caused by leakage. The reference volume compensates for environmental temperature fluctuations that affect both volumes equally, eliminating false failures from thermal effects. Transducers with digital outputs connect to data acquisition systems that log readings at intervals as short as one second, creating detailed pressure decay curves that characterize leak rates quantitatively.
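The relationship between a logged pressure drop and a leak rate can be sketched with the usual pressure-decay approximation: leak rate equals test volume times pressure change divided by elapsed time. The helper below is a simplified illustration that assumes constant temperature and a known, rigid test volume. Its name and units are assumptions for this example.

```python
ATM_PSI = 14.696  # one standard atmosphere, psi


def leak_rate_sccs(volume_cc: float, pressure_drop_psi: float,
                   elapsed_s: float) -> float:
    """Approximate leak rate in standard cubic centimetres per second."""
    if volume_cc <= 0 or elapsed_s <= 0:
        raise ValueError("volume and elapsed time must be positive")
    drop_atm = pressure_drop_psi / ATM_PSI
    return volume_cc * drop_atm / elapsed_s


if __name__ == "__main__":
    # Hypothetical reading: 1 litre test volume losing 0.01 psi in 60 s.
    print(f"{leak_rate_sccs(1000.0, 0.01, 60.0):.4f} scc/s")
```

The formula also shows why large volumes need long holds or sensitive transducers: the same leak produces a proportionally smaller pressure change as volume grows.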

Tracer gas equipment becomes necessary for critical applications where helium mass spectrometry or hydrogen sensing provides detection sensitivity 1,000 times greater than pressure decay methods. Helium systems pressurize the test piece with helium-nitrogen mixtures, then use mass spectrometers to detect helium molecules leaking through defects as small as 10^-9 standard cubic centimeters per second. The mass spectrometer’s vacuum chamber separates helium from other gases by molecular weight, generating proportional signals for leak rate quantification. Hydrogen tracer systems offer similar sensitivity at lower equipment cost, using electrochemical or thermal conductivity sensors to detect hydrogen concentrations in swept air samples collected around potential leak sites.

Remote monitoring systems support testing operations on large installations spanning multiple building areas or outdoor piping networks. Wireless pressure transmitters positioned at strategic system locations communicate readings to central monitoring stations, eliminating the need for technicians to walk miles of piping checking local gauges. These systems incorporate alarming features that notify operators immediately when pressure drops below acceptance criteria, enabling rapid response to developing leaks. Data logging capabilities create permanent test records satisfying quality assurance and regulatory documentation requirements.

Safety equipment for industrial pressure testing includes engineered pressure relief assemblies, personnel barriers, warning signage, and detection equipment for hazardous test media. Relief assemblies consist of certified rupture disks or pressure relief valves sized to vent system volume safely if test pressure exceeds design limits, preventing catastrophic equipment failure. The relief vent must discharge to a safe location away from personnel and ignition sources. Barriers made from chain-link fencing or caution tape establish exclusion zones preventing personnel access during testing, with zone size calculated based on stored energy and potential projectile range. Warning signs meeting ANSI Z535 standards communicate hazards clearly in multiple languages.

According to ASME B31.3 process piping code requirements, pneumatic test systems exceeding 100,000 foot-pounds of stored energy must undergo formal hazard analysis and implement documented safety procedures before testing begins.

How Do You Perform a Pressure Test After Installation?

You perform a pressure test after installation by isolating the system, gradually pressurizing to the specified test pressure using appropriate media, holding that pressure for a code-required duration while monitoring for pressure loss, and documenting the results before depressurizing—with specific procedures varying by system type. HVAC systems typically use dry nitrogen pressurized in 100 psi increments to 200-600 psi and held for 30 minutes to 48 hours depending on scale, while plumbing systems undergo hydrostatic testing at 150 psi for 2-24 hours, and industrial piping follows ASME code requirements at 1.5 times design pressure for a minimum 10-minute hold. All procedures require preliminary inspection to verify proper installation, systematic pressurization following safety protocols, continuous monitoring for leaks during the hold period, and proper documentation meeting regulatory requirements.

However, successful pressure testing begins well before connecting test equipment. Preliminary system inspection verifies completion of all installation work, confirms proper support and restraint to prevent movement under pressure, checks that all joints are visible and accessible for leak observation, and ensures no test-sensitive equipment remains in the system. Components like pumps, compressors, control valves, and instrumentation often cannot tolerate test pressures and require isolation or removal before testing begins. Temporary blinds or spectacle flanges installed at equipment boundaries provide positive isolation, while portable plugs seal open-ended piping sections.

System purging removes air and moisture that could compromise test accuracy or damage components when pressurized. Nitrogen purging for HVAC systems involves flowing nitrogen through all sections while venting at the far end, continuing until oxygen sensors confirm less than 1% oxygen content. This step prevents oxidation of copper surfaces during brazing heat and eliminates moisture that freezes at expansion devices or reacts with refrigerant to form acids. Water system filling requires opening high-point vents systematically as water level rises, allowing trapped air to escape rather than compress into pockets that create false pressure readings.

The pressurization protocol follows a staged approach rather than rapid pressurization to target pressure. Initial pressurization to 25-50 psi allows detection of gross leaks at low stored energy before reaching dangerous pressure levels. This “snub test” protects against catastrophic failure when major installation errors exist like missing gaskets, uncapped pipe ends, or improperly assembled joints. After confirming the system holds initial pressure, continue pressurizing in 50-100 psi increments, pausing briefly at each stage to observe for new leaks that appear as stress increases.
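The staged approach can be written down as a simple list of hold points. This is a minimal sketch with hypothetical defaults (a 50 psi first hold and 100 psi steps, both within the ranges mentioned above). Actual stage sizes should follow the project procedure.

```python
from typing import List


def pressurization_stages(target_psi: float,
                          first_hold_psi: float = 50.0,
                          increment_psi: float = 100.0) -> List[float]:
    """Return the pressures at which to pause, ending at target_psi."""
    if target_psi <= 0 or first_hold_psi <= 0 or increment_psi <= 0:
        raise ValueError("all pressures must be positive")
    # First pause at low pressure to catch gross leaks early.
    stages = [min(first_hold_psi, target_psi)]
    # Step up in fixed increments, never overshooting the target.
    while stages[-1] < target_psi:
        stages.append(min(stages[-1] + increment_psi, target_psi))
    return stages


if __name__ == "__main__":
    print(pressurization_stages(300))
```

Each value in the list is a point to stop, watch the gauge, and check for new leaks before continuing.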

The hold period begins once target test pressure is reached and the system is isolated from the pressurization source. Isolation typically involves closing valves on charging hoses and removing hoses from service ports to verify the system maintains pressure independently. During the hold period, record initial pressure and temperature, then monitor both parameters at regular intervals: every 5 minutes for short tests, hourly for extended tests. Temperature changes affect gas pressure according to Gay-Lussac’s law: P₁/T₁ = P₂/T₂, where pressures are absolute (psia = psig + 14.7 at sea level) and temperatures use an absolute scale (Rankine or Kelvin). A 10°F temperature drop from typical ambient conditions causes approximately a 2% reduction in absolute pressure in a sealed gas volume, which appears as leakage if not compensated.
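A short worked example makes the temperature effect concrete. The sketch below applies Gay-Lussac’s law to predict what a leak-free system should read after a temperature change. It assumes ideal-gas behaviour and a sea-level atmosphere of 14.7 psi, and the function name is illustrative.

```python
ATM_PSI = 14.7          # assumed atmospheric pressure, psia
RANKINE_OFFSET = 459.67  # degrees F to degrees Rankine


def expected_psig(start_psig: float, start_temp_f: float,
                  end_temp_f: float) -> float:
    """Gauge pressure a sealed, leak-free gas volume should show
    after its temperature changes from start_temp_f to end_temp_f."""
    start_abs = start_psig + ATM_PSI
    t1 = start_temp_f + RANKINE_OFFSET
    t2 = end_temp_f + RANKINE_OFFSET
    return start_abs * t2 / t1 - ATM_PSI


if __name__ == "__main__":
    end = expected_psig(300.0, 80.0, 70.0)
    print(f"expected reading: {end:.2f} psig")
    print(f"apparent loss:    {300.0 - end:.2f} psi")
```

For a system charged to 300 psig at 80°F, a drop to 70°F alone accounts for nearly 6 psi of apparent loss, which is why uncompensated readings can look like a leak.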

What Are the Step-by-Step Procedures for HVAC System Pressure Testing?

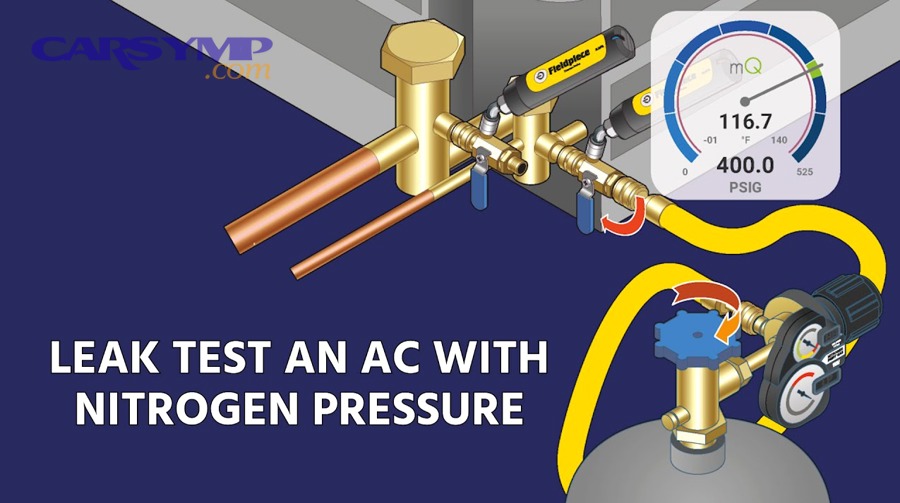

HVAC system pressure testing follows an eight-step process: purge the system with dry nitrogen, remove valve cores, connect the pressure regulator and digital manifold, pressurize gradually to target pressure (200-600 psi based on application), isolate the system and begin the hold period (30-60 minutes residential, 4-8 hours commercial, 24-48 hours for large industrial installations), monitor for temperature-compensated pressure loss, conduct leak checks if pressure drops, and document results before refrigerant charging.

Step 1: System purging with dry nitrogen removes air and moisture before testing begins. Connect a nitrogen cylinder with regulator to the liquid line service port and a purge hose from the suction service port to an exterior vent location. Open system valves fully and flow nitrogen at 3-5 psi for 5-10 minutes, creating a gentle positive pressure that displaces air without excessive velocity that entrains moisture from internal surfaces. This low-pressure purge proves more effective than high-pressure blowing which can force moisture deeper into system crevices. Verify purge completion using oxygen sensors—readings below 1% oxygen confirm adequate nitrogen displacement.

Step 2: Valve core removal increases flow rates during pressurization and prevents damage to Schrader valve seats from high-pressure cycling. Use a proper core removal tool that captures cores without releasing excessive nitrogen, and store removed cores in clean containers to prevent contamination. Some technicians skip this step on small residential systems, but valve seat damage from repeated high-pressure testing leads to persistent slow leaks that contaminate refrigerant charges and compromise system performance.

Step 3: Equipment connection requires attaching the nitrogen regulator to the cylinder, connecting a charging hose from the regulator to one service port, and attaching a digital manifold to monitor pressure. Position the manifold at the opposite service port from the nitrogen connection to ensure readings reflect system pressure rather than supply pressure. Enable temperature compensation features on digital manifolds by attaching temperature probes to refrigerant lines or placing probes in ambient air near the installation. Verify all connections are tight before pressurization begins—loose fittings at service ports or manifold connections appear as system leaks during testing.

Step 4: Gradual pressurization protects components from shock loads while enabling early detection of major leaks. Begin flowing nitrogen slowly, watching manifold readings climb steadily. Pause at 100 psi for one minute, observing for rapid pressure loss indicating gross leaks. If pressure holds, continue to 200 psi and pause again. This staged approach reduces safety risks and prevents wasted nitrogen when major installation defects exist. Continue pressurizing in 100 psi increments to the target pressure—typically 250-300 psi for residential split systems, 400-450 psi for commercial rooftop units, and 500-600 psi for industrial refrigeration. Never exceed equipment pressure ratings marked on nameplates or manufacturers’ specifications.

Step 5: System isolation begins by closing the ball valve on the charging hose, then removing the hose from the service port and immediately installing a service port cap to prevent nitrogen escape. The system now stands alone without external pressure support, revealing any leakage through pressure decay. Record initial pressure, temperature, and time as the baseline for pressure loss calculations. Modern digital manifolds timestamp readings automatically and calculate decay rates continuously.

Step 6: Monitoring duration depends on system size and criticality. Residential systems typically require 30-60 minute holds sufficient to detect leaks exceeding 0.5 psi per hour. Commercial systems need 4-8 hour holds to verify installations before costly refrigerant charging. Large industrial installations mandate 24-48 hour tests ensuring leak rates remain below stringent limits, often less than 0.1 psi per hour adjusted for temperature. During monitoring, check pressure readings at regular intervals and note ambient temperature changes. Calculate temperature-compensated pressure using the formula: P_compensated = P_measured × (T_initial / T_measured), where pressure is absolute (psia = psig + 14.7) and temperature uses an absolute scale (°R = °F + 459.67).

Step 7: Leak detection proceeds immediately if pressure loss exceeds acceptance criteria. Apply soap solution systematically to all brazed joints, flare connections, threaded fittings, and valve stems, observing for bubble formation indicating leakage. Electronic leak detectors supplement visual inspection in hard-to-access locations, but note that a refrigerant detector cannot sense pure nitrogen. Where regulations permit, some installations therefore add a small amount of refrigerant (5-10%) to the nitrogen as a trace gas, enabling electronic detection while keeping the test charge mostly dry, inert nitrogen.

Step 8: Documentation requirements include recording test pressure, duration, temperature conditions, pressure loss measurement, leak locations found, and pass/fail status. Many digital manifolds generate PDF reports automatically through smartphone apps, satisfying code requirements for certified test records. Include photographs documenting gauge readings and leak test results. This documentation protects against warranty disputes and liability claims, proving due diligence in installation verification.

According to the Air Conditioning Contractors of America (ACCA), proper nitrogen pressure testing reduces refrigerant leakage rates by 92% compared to installations using only vacuum testing without pressure verification, demonstrating the procedure’s effectiveness in ensuring long-term system integrity.

What Are the Step-by-Step Procedures for Plumbing and Water Line Testing?

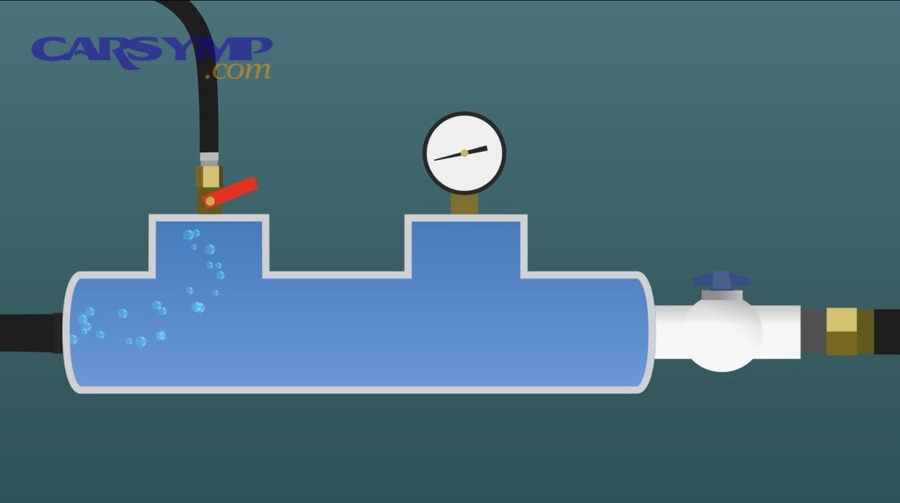

Plumbing and water line pressure testing involves seven key steps: isolate the test section and verify all endpoints are capped or valved, drain residual water and purge air completely, connect the test pump and calibrated gauge, fill the system slowly while venting air from high points, pressurize to specification (typically 150 psi), hold pressure for the required duration (2-24 hours) while monitoring for decay, and inspect all connections during the hold period to locate any leaks.

Step 1: System isolation defines the test boundaries by closing valves at the section limits or installing temporary caps and plugs at open pipe ends. For new construction, ball valves separate the new installation from existing systems, while test plugs seal stub-outs for future fixtures. Verify isolation valve integrity before proceeding—a leaking isolation valve allows pressure loss to adjoining systems, creating false test failures. Test section size balances practicality against troubleshooting efficiency—excessively large test sections make leak location difficult, while testing small sections consumes unnecessary time. Residential installations typically test entire floors or branches as units, commercial buildings test by zone or riser, and municipal systems test by block sections.

Step 2: Drainage removes construction debris, pipe joint compound, and residual water that could interfere with test water quality or mask small leaks. Open drain valves at system low points, allowing gravity drainage to remove bulk water. Use compressed air to blow remaining water from horizontal runs and low-point traps. Complete drainage proves critical for pneumatic testing where water in the lines creates pressure measurement errors and dangerous slug flow hazards if water accelerates through piping during depressurization.

Step 3: Equipment connection requires attaching the test pump to a convenient system access point, typically a hose bibb, cleanout, or dedicated test port. Thread adapters carefully to avoid cross-threading brass fittings, and use thread sealant rated for potable water if the installation will later carry drinking water. Mount the pressure gauge at a visible location near the pump or at a remote high point in the system where operators can observe readings throughout the test. Ensure the gauge valve remains closed during filling to prevent water entry into the Bourdon tube mechanism, opening only after pressurization begins.

Step 4: System filling introduces clean water slowly to allow air escape through vent points. Begin with all high-point vents open, starting water flow at a rate that produces gentle turbulence without water hammer—typically 2-5 gallons per minute depending on pipe size. Watch for water discharge from vents indicating that section is full, then close vents systematically as filling progresses. Inadequate air venting creates compressible air pockets that absorb pressure like shock absorbers, preventing accurate pressure readings and masking leaks. Large systems may require hours to fill completely, and operators must verify water appears at the highest vent before considering filling complete. Some installations incorporate automatic air vents that close when water reaches them, though manual vents provide more reliable verification.
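To get a feel for how long filling takes, the pipe volume can be estimated from geometry and divided by the fill rate. The sketch below is a rough estimate under stated assumptions: it treats the pipe as a plain cylinder, ignores fittings and fixtures, and expects the actual inside diameter rather than the nominal size. Function names are hypothetical.

```python
import math

CUBIC_INCHES_PER_GALLON = 231.0  # US gallon


def pipe_volume_gallons(inside_diameter_in, length_ft):
    """Volume of a straight cylindrical pipe run in US gallons."""
    area_in2 = math.pi / 4.0 * inside_diameter_in ** 2
    return area_in2 * (length_ft * 12.0) / CUBIC_INCHES_PER_GALLON


def fill_time_minutes(volume_gal, flow_gpm):
    """Minutes needed to fill a given volume at a steady flow rate."""
    if flow_gpm <= 0:
        raise ValueError("flow_gpm must be positive")
    return volume_gal / flow_gpm


if __name__ == "__main__":
    # Example values only: 200 ft of pipe with a 2 in inside diameter, filled at 3 gpm.
    volume = pipe_volume_gallons(2.0, 200.0)
    print(round(volume, 1), "gal,", round(fill_time_minutes(volume, 3.0), 1), "min")
```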

Step 5: Pressurization begins after verifying complete water filling and all vents closed. Pump steadily, watching the gauge climb toward test pressure. Pumping resistance increases significantly as target pressure approaches—manual pumps require substantial force for the final 50 psi. Listen for hissing sounds indicating large leaks that should be corrected before reaching full test pressure, saving water and preventing damage to surrounding areas. Once reaching test pressure (typically 150 psi for residential, 200 psi for commercial), stop pumping and observe the gauge for several minutes. Slight pressure drop is normal as piping material stretches slightly under initial pressurization—this “pipe creep” occurs once and doesn’t indicate leakage.

Step 6: Hold period duration varies by code jurisdiction and system size. The International Plumbing Code requires a minimum 15-minute hold at 150 psi for residential systems, while many jurisdictions mandate 2-hour minimum tests. Large commercial systems need 8-24 hour holds, allowing tiny leaks to produce measurable pressure loss. Calculate allowable leakage using the formula: Q = 0.0002 × D × √P, where Q is leakage in gallons per hour, D is pipe diameter in inches, and P is test pressure in psi. A 4-inch pipe at 150 psi allows about 0.01 gallons per hour (0.0002 × 4 × √150 ≈ 0.0098), which is barely detectable over short test periods. During the hold, record pressure readings at 15-minute intervals initially, then hourly for extended tests. Pressure loss exceeding allowable rates requires leak location and repair.
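The allowable-leakage formula quoted in this step is easy to evaluate for other pipe sizes and pressures. The following sketch simply encodes that formula as written here; the function names are hypothetical, and the governing code for a given project may specify a different expression.

```python
import math


def allowable_leakage_gph(diameter_in, test_pressure_psi):
    """Allowable leakage in gallons per hour: Q = 0.0002 * D * sqrt(P)."""
    if diameter_in <= 0 or test_pressure_psi < 0:
        raise ValueError("diameter must be positive and pressure non-negative")
    return 0.0002 * diameter_in * math.sqrt(test_pressure_psi)


def allowable_leakage_over_hold(diameter_in, test_pressure_psi, hold_hours):
    """Total allowable leakage in gallons across the whole hold period."""
    return allowable_leakage_gph(diameter_in, test_pressure_psi) * hold_hours


if __name__ == "__main__":
    # The 4-inch, 150 psi case from the text.
    print(round(allowable_leakage_gph(4, 150), 4))
```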

Step 7: Visual inspection during the hold period locates leaks through dripping water, moisture stains, or audible hissing at connection points. Systematically inspect every joint, valve, fitting, and welded seam within the test section. Threaded connections show leaks at the thread interface, soldered joints leak from incomplete solder penetration or contaminated surfaces, and mechanical joints leak from improper gasket compression or misaligned components. Mark identified leaks with tape or spray paint for repair crews, and photograph leak locations for documentation. When repairs are needed, depressurize completely before loosening any connections to prevent high-pressure water jets that cause injuries and property damage.

The American Water Works Association (AWWA) reports that systematic hydrostatic pressure testing identifies 94% of installation defects before systems enter service, preventing water loss averaging 3,500 gallons annually per undetected leak in municipal systems.

What Are the Step-by-Step Procedures for Industrial Piping Systems?

Industrial piping system pressure testing follows ASME B31.3 code requirements through nine comprehensive steps: conduct pre-test hazard analysis and stored energy calculations, develop a written test plan specifying pressures and durations, establish safety zones and install warning barriers, verify isolation completeness and install relief protection, fill and vent the system thoroughly, pressurize gradually in stages with hold periods, conduct the full-duration test at test pressure, perform systematic leak inspection with documentation, and complete post-test depressurization and system restoration.

Step 1: Hazard analysis quantifies stored energy using the formula E = (P × V) / (2 × 144), where E is energy in foot-pounds, P is pressure in psig, and V is volume in cubic inches. Systems exceeding 100,000 foot-pounds require enhanced safety protocols including formal job hazard analysis, written test procedures, and trained test supervisors. The analysis identifies potential failure modes, determines safe standoff distances, and establishes emergency response procedures. This preliminary analysis often reveals whether hydrostatic testing is feasible or if pneumatic testing becomes necessary despite higher risks.

Step 2: Written test procedures document every aspect of testing including system boundaries, test media selection, target pressures, pressurization rates, hold duration, acceptance criteria, personnel responsibilities, and emergency procedures. The test plan must receive approval from the designated authority (typically the project engineer or plant safety officer) before testing begins. This document serves as the procedure manual during testing and becomes permanent quality assurance evidence of proper installation verification. Include piping and instrumentation diagrams (P&IDs) marked to show test boundaries, isolation points, vent locations, and monitoring positions.

Step 3: Safety zone establishment creates physical barriers preventing personnel access during testing. Calculate exclusion zone radius using the formula R = K × (V × P)^(1/3), where R is radius in feet, V is volume in cubic feet, P is pressure in psi, and K is a safety factor (typically 2-4 depending on confidence in piping integrity). Post warning signs at all approach paths stating “DANGER – PRESSURE TEST IN PROGRESS – AUTHORIZED PERSONNEL ONLY.” Assign safety monitors who maintain zone integrity throughout testing, preventing inadvertent entry by personnel unfamiliar with the hazard. Communication systems including two-way radios enable coordination between monitoring positions when testing large installations with multiple observation points.
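For planning purposes, the exclusion-zone formula given in this step can be evaluated directly. The sketch below encodes it exactly as stated in the text and defaults K to 4, the conservative end of the 2-4 range mentioned; the function name is hypothetical, and a site's own safety procedure governs the actual standoff distance.

```python
def exclusion_radius_ft(volume_ft3, pressure_psi, k=4.0):
    """Exclusion zone radius in feet: R = K * (V * P) ** (1/3), as given in the text."""
    if volume_ft3 < 0 or pressure_psi < 0:
        raise ValueError("volume and pressure must be non-negative")
    return k * (volume_ft3 * pressure_psi) ** (1.0 / 3.0)


if __name__ == "__main__":
    # Example values only: 10 cubic feet of piping at 100 psi.
    for k in (2.0, 3.0, 4.0):
        print(k, round(exclusion_radius_ft(10.0, 100.0, k), 1))
```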

Step 4: Isolation verification confirms complete separation between the test section and adjoining systems that could suffer damage or allow pressure escape. Double block and bleed configurations provide the highest isolation reliability: two closed valves with an open bleed between them ensure that leakage past either valve vents safely instead of pressurizing the adjoining system. Alternatively, remove spool pieces and install blank flanges at boundaries, providing visible confirmation of complete isolation. Install pressure relief assemblies sized to safely vent the test volume if overpressure occurs, with discharge piping routed to safe locations away from personnel and equipment.

Step 5: System filling differs significantly between hydrostatic and pneumatic methods. Hydrostatic tests require completely filling the piping with water, opening high-point vents systematically as liquid level rises. Large systems may need days to fill completely, and venting thoroughness directly affects test validity—even 1% trapped air in a liquid-filled system significantly reduces leak detection sensitivity. Pneumatic tests skip filling, instead introducing gas slowly from supply points positioned to promote even pressure distribution. Never pressurize rapidly with gas, as adiabatic compression generates heat that can damage plastic-lined piping or melt polymer gaskets.

Step 6: Staged pressurization proceeds according to ASME code requirements that mandate initial pressurization to 50% test pressure, hold for 10 minutes while inspecting for gross leaks, then increase gradually to full test pressure in 10% increments. Each stage includes a brief hold period allowing stress distribution throughout the piping network and providing opportunity to detect problems before reaching maximum stored energy. Monitor pressurization rate carefully—pneumatic tests should not exceed 50 psi per minute to prevent shock loading of supports and thermal effects from compression heating.
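The staging described in this step (50% of test pressure, then 10% increments, with a pneumatic ramp limit of 50 psi per minute) can be laid out ahead of time. This is a sketch of that schedule only; the function names are hypothetical and the approved test plan remains the authority.

```python
def staged_pressures(test_pressure_psi):
    """Hold points at 50%, 60%, ..., 100% of the test pressure."""
    if test_pressure_psi <= 0:
        raise ValueError("test_pressure_psi must be positive")
    return [test_pressure_psi * pct / 100 for pct in range(50, 101, 10)]


def min_ramp_minutes(from_psi, to_psi, max_rate_psi_per_min=50.0):
    """Shortest allowable time to raise pressure between two hold points."""
    if to_psi < from_psi:
        raise ValueError("to_psi must not be below from_psi")
    return (to_psi - from_psi) / max_rate_psi_per_min


if __name__ == "__main__":
    # Example value only: a 300 psi pneumatic test.
    previous = 0.0
    for stage in staged_pressures(300):
        print(stage, "psi, ramp at least", min_ramp_minutes(previous, stage), "min")
        previous = stage
```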

Step 7: The test hold period lasts a minimum of 10 minutes at full test pressure per ASME B31.3, though many installations require longer durations based on system complexity and leak rate acceptance criteria. During the hold, at least one qualified observer must continuously monitor pressure readings and visually inspect accessible piping for leaks. Large systems require multiple observers positioned strategically to observe all sections. Record pressure and temperature readings at one-minute intervals for the first 10 minutes, then at five-minute intervals thereafter. Any pressure loss beyond that attributable to temperature changes or material relaxation indicates leakage requiring investigation.
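The recording cadence in this step (every minute for the first 10 minutes, every five minutes afterward) can be turned into a list of reading times for a data sheet. The helper below is a hypothetical sketch; it also adds a final reading at the end of the hold when the hold does not end on a five-minute mark, which is an assumption of this example.

```python
def reading_times_minutes(hold_minutes):
    """Minutes after the start of the hold at which to record pressure and temperature."""
    if hold_minutes < 0:
        raise ValueError("hold_minutes must be non-negative")
    # One-minute intervals through the first 10 minutes.
    times = list(range(0, min(hold_minutes, 10) + 1))
    # Five-minute intervals thereafter.
    t = 15
    while t <= hold_minutes:
        times.append(t)
        t += 5
    # Assumption: always take a closing reading at the end of the hold.
    if times[-1] != hold_minutes:
        times.append(hold_minutes)
    return times


if __name__ == "__main__":
    print(reading_times_minutes(30))
```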

Step 8: Systematic inspection during and after the hold period locates any leaks through visual evidence. For hydrostatic tests, look for dripping water, moisture stains on insulation, or water accumulation below joints. Pneumatic tests rely on soap solution applied to all connections, with particular attention to threaded joints, welded seams, and mechanical compression fittings. Large leaks produce audible hissing detectable without soap solution. Ultrasonic leak detectors identify turbulent gas flow at frequencies above human hearing range, proving especially valuable for locating leaks in noisy industrial environments. Document all leak locations with photographs showing context and close-up views of the defect.

Step 9: Depressurization must proceed slowly for pneumatic tests to prevent thermal effects from rapid gas expansion and to avoid pressure transients that could damage piping or instrumentation. Vent through properly sized connections at controlled rates not exceeding 50 psi per minute. Hydrostatic tests drain through designated drain points, collecting test water for proper disposal according to environmental regulations. Verify complete depressurization using pressure gauges before approaching the test section or breaking any connections. Remove all test equipment, reinstall any components removed for testing, and complete restoration to operational configuration. Final documentation includes test data sheets with all pressure readings, temperature conditions, leak locations, repairs made, and final pass/fail determination signed by responsible personnel.

According to a 2019 study by the American Society of Mechanical Engineers, industrial piping systems undergoing systematic pressure testing per ASME B31.3 protocols experience 87% fewer in-service failures during the first five operational years compared to systems with abbreviated or improperly conducted testing procedures.

How Do You Perform a Leak Check After Installation?

You perform a leak check after installation by systematically applying detection methods to locate specific leak points after pressure testing indicates pressure loss, using bubble solutions for visual identification, electronic sensors for trace gas detection, or ultrasonic devices for acoustic leak sensing. The leak check serves as a complementary procedure to pressure testing—the quantitative pressure test reveals whether leaks exist somewhere in the system, while the qualitative leak check pinpoints exactly where those leaks occur, enabling targeted repairs rather than wholesale re-work. Effective leak checking requires methodical coverage of all potential leak sites including threaded connections, brazed or soldered joints, mechanical fittings, valve stems, gasket surfaces, and welds, with the detection method selected based on test media, leak size, and access limitations.

Keeping pressure testing and leak checking distinct prevents confusion about their respective roles in installation verification. Pressure testing measures system tightness as a whole, determining if the installation meets acceptance criteria through quantitative pressure decay rates or allowable leakage volumes. This test answers the question “Is this system tight enough?” with a binary pass/fail result. Leak checking, conversely, identifies the physical location of individual leaks once pressure testing reveals their presence. This diagnostic process answers “Where are the leaks?” enabling repair teams to address specific defects efficiently.

The timing relationship between pressure testing and leak checking depends on installation type and test results. Small residential installations often combine both procedures simultaneously—technicians pressurize the system then immediately begin leak checking without waiting for extended hold periods, as any detected leaks receive immediate repair. Large commercial and industrial installations separate the procedures sequentially—conduct pressure testing first with extended hold periods to quantify leak rates, then perform targeted leak checking only if pressure loss exceeds acceptance criteria. This approach conserves time on leak-free installations while providing systematic troubleshooting when problems exist.

What Is the Bubble Test Method for Finding Leaks?

The bubble test method for finding leaks applies soapy solution to pressurized connections and observes bubble formation indicating gas escape, providing simple, inexpensive visual leak detection suitable for all connection types accessible to direct application. Commercial leak detection solutions like Rectorseal’s Leak Seeker or Big Blu offer optimized bubble stability and visibility, though simple dish soap mixed at one part soap to ten parts water provides adequate performance for most applications.

Preparing effective bubble solution requires attention to mixture ratio and application method. Commercial solutions arrive ready-to-use in squeeze bottles or spray applicators, formulated to produce abundant, long-lasting bubbles at leak sites while not corroding metal surfaces or attacking plastic components. DIY solutions mix one part liquid dish soap with ten parts water, creating thin solution that spreads easily without excessive foaming during application. Avoid using detergents with lotions, degreasers, or antibacterial additives that reduce bubble formation. Distilled water improves solution performance compared to tap water by eliminating minerals that inhibit bubbling.

Application technique determines detection effectiveness. Apply solution generously to completely wet the connection surface and 1-2 inches of adjacent pipe on both sides of the joint. On threaded connections, work solution into thread valleys where gases most commonly escape. Overhead connections require spray applicators or brush application to get adequate coverage without excessive drip-off. Wait 5-10 seconds after application before judging results—tiny leaks produce small bubbles slowly, and premature judgment misses subtle indications. Active leaks produce growing bubble streams within seconds, resembling champagne bubbles emerging continuously from the same location.

Interpreting bubble test results requires understanding bubble characteristics. Large rapid bubbles indicate significant leaks requiring immediate attention. Small bubbles forming slowly suggest minor leaks that may still exceed acceptance criteria for the system type. No bubble formation confirms tightness at that location, allowing the technician to move systematically to the next connection. False indications occasionally occur from soap residue or pipe surface irregularities that trap air bubbles—verify suspected leaks by wiping the area clean and reapplying solution for confirmation.

Environmental conditions affect bubble test reliability. Cold temperatures increase solution viscosity and reduce bubble formation rates, making tiny leaks harder to detect. Solutions freeze below 32°F, preventing winter use without heated workspace. Hot conditions cause rapid evaporation before bubbles can form, requiring repeated application or wet rags placed over connections to maintain moisture. Wind outdoors disrupts bubble formation and makes observation difficult, necessitating portable windscreens around test areas.

Systematic coverage prevents missing leaks through haphazard inspection. Start at one end of the test section and work methodically toward the other end, checking every connection in sequence. Create a checklist documenting all joints inspected, marking each as checked regardless of whether leaks are found. This approach ensures complete coverage rather than randomly checking obvious locations while missing others. Photography documents both leak locations and leak-free areas, providing quality assurance evidence.

Certain connection types require special attention during bubble testing. Valve stem packing leaks show bubbles at the stem where it exits the bonnet, indicating worn packing that requires adjustment or replacement. Threaded connections leak most commonly at the first few thread turns closest to the fitting body where sealing compound coverage may be inadequate. Brazed or soldered joints leak through porosity in the filler metal or areas where brazing rod didn’t flow completely into the joint gap. Flared connections leak when the flare nose doesn’t seat completely against the fitting cone, often from debris, damaged flares, or insufficient tightening torque.

What Are Electronic Leak Detection Methods?

Electronic leak detection methods use sensors that detect specific gases or acoustic signatures from escaping fluids, offering higher sensitivity than visual methods and enabling leak location in inaccessible areas where direct observation proves impossible. The four primary electronic detection technologies include heated diode refrigerant detectors for HVAC applications, ultrasonic sensors for detecting turbulent gas flow sounds, oxygen depletion monitors for nitrogen testing verification, and tracer gas analyzers for ultra-sensitive industrial applications.

Heated diode refrigerant detectors operate by passing sampled air over a heated ceramic element coated with alkali metal salts. When halogenated refrigerant molecules contact the hot surface, they decompose and release halogen ions that alter electrical conductivity between electrodes. This conductivity change generates signals proportional to refrigerant concentration, triggering audible and visual alarms when thresholds are exceeded. Modern detectors sense leak rates as low as 0.1 ounces of refrigerant per year, making them invaluable for locating tiny leaks that would take hours to reveal through pressure decay alone.

Operating refrigerant detectors effectively requires proper technique. Hold the sensor probe approximately 1 inch from the surface being checked, moving slowly at 1-2 inches per second. Faster movement causes the sensor to sample insufficient gas volume, reducing detection probability. Concentrate on connection points and areas below joints where heavier-than-air refrigerants accumulate. Reset the detector in clean air every 10-15 seconds by holding it away from the piping, allowing the sensor to re-establish baseline before continuing. Sensitivity adjustment features enable coarse scanning at lower sensitivity to find general leak areas, then fine scanning at maximum sensitivity to pinpoint exact sources.

Ultrasonic leak detectors identify leaks through acoustic signatures rather than chemical sensing. When pressurized gas escapes through small orifices, turbulent flow generates ultrasonic sound waves at 20-100 kHz frequencies above human hearing range. The detector’s microphone captures these frequencies, processing them through heterodyne circuits that shift them into audible range for headphone output while simultaneously displaying signal strength on LED bar graphs or numeric intensity readings. This technology works with any gas—nitrogen, compressed air, steam, refrigerant—making it versatile across applications.

Ultrasonic detection excels in noisy industrial environments where ambient sounds mask the hissing noises from leaks. The detector’s narrow frequency selectivity rejects low-frequency machinery noise, motor sounds, and human voices while responding only to ultrasonic leak signatures. Some models accept directional parabolic dish accessories that provide focused sensitivity, locating leaks from 30-40 feet away in large facilities where close approach is difficult. However, ultrasonic methods have limitations: they require relatively large leaks producing turbulent flow (generally above 1 CFH for reliable detection), and heavy insulation blocks ultrasonic transmission, preventing detection of leaks within insulated piping.

Oxygen depletion sensors for nitrogen testing use electrochemical cells that generate current proportional to oxygen partial pressure in sampled gas. Normal air contains 20.9% oxygen by volume, and when nitrogen displaces air in a leaking system, oxygen concentration drops measurably. Wrapping plastic sheeting around suspected leak areas creates sampling chambers, and inserting the oxygen sensor probe through a hole in the plastic monitors oxygen levels. Concentrations below 19.5% indicate nitrogen presence confirming leakage, with lower readings indicating larger leaks. This method works only for nitrogen or inert gas testing and cannot be used with refrigerants or other gases that don’t displace oxygen.
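The oxygen readings described above can be interpreted with a short helper. This sketch uses the 20.9% ambient figure and the 19.5% threshold from the text; the estimate of how much of the sample is leaked nitrogen is a simple dilution calculation that assumes the gas inside the plastic enclosure is well mixed. Function names are hypothetical.

```python
AMBIENT_O2_PCT = 20.9      # oxygen in normal air, percent by volume
LEAK_THRESHOLD_PCT = 19.5  # readings below this indicate nitrogen presence


def nitrogen_leak_indicated(o2_pct):
    """True when the oxygen reading falls below the leak threshold."""
    return o2_pct < LEAK_THRESHOLD_PCT


def displaced_air_fraction(o2_pct):
    """Fraction of the sampled gas that is added nitrogen rather than air.

    Assumes a well-mixed sample diluted only by nitrogen.
    """
    if not 0.0 <= o2_pct <= AMBIENT_O2_PCT:
        raise ValueError("o2_pct must be between 0 and ambient oxygen")
    return 1.0 - o2_pct / AMBIENT_O2_PCT


if __name__ == "__main__":
    # Example readings only.
    for reading in (20.9, 19.8, 18.0):
        print(reading, nitrogen_leak_indicated(reading),
              round(displaced_air_fraction(reading), 3))
```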

Tracer gas analyzers represent the pinnacle of leak detection sensitivity for critical applications requiring extreme tightness verification. Helium mass spectrometers detect helium atoms at concentrations below one part per million, enabling leak detection down to 10^-9 standard cubic centimeters per second—100,000 times more sensitive than pressure decay methods. The system evacuates a test chamber containing the component, uses a mass spectrometer to continuously analyze the vacuum for helium, and registers increased helium concentration when the tracer gas leaks from the pressurized internal volume. Hydrogen sensors offer similar sensitivity at lower cost, detecting hydrogen by thermal conductivity or electrochemical reaction.

These advanced methods find application in aerospace, semiconductor manufacturing, medical device production, and nuclear power where leak rates must approach zero. However, the complexity and cost of tracer gas systems limit their use in routine construction—helium costs $50-$150 per cylinder, specialized sensors cost thousands of dollars, and trained operators are required for reliable results. Most HVAC, plumbing, and industrial piping applications achieve adequate quality assurance with simpler pressure decay testing and bubble solution verification.

How Do You Systematically Check All Potential Leak Points?

You systematically check all potential leak points by creating a comprehensive inspection checklist from system drawings, prioritizing high-risk connection types, working methodically through the system following a consistent pattern, documenting both leak findings and verified-tight areas, and cross-referencing inspection results against piping and instrumentation diagrams to ensure complete coverage. The systematic approach prevents the common mistakes of randomly checking obvious locations while overlooking others, repeatedly checking the same areas, or losing track of which connections have been inspected in complex installations with hundreds of joints.

Creating the inspection checklist begins during the project planning phase by reviewing construction drawings and identifying all field-fabricated joints. Pre-fabricated assemblies from manufacturers rarely leak if properly handled during installation, whereas field joints created onsite through threading, welding, brazing, soldering, or mechanical connections represent the highest failure risk. The checklist should enumerate joints by type and location: “Threaded union, cold water main at column B-3,” “Brazed elbow, suction line at evaporator,” “Flanged connection, discharge piping at pump.” Number each joint sequentially to enable tracking of inspection progress, with check-boxes indicating inspection completion and leak status.

Priority sequencing addresses high-risk areas first before investing time on lower-risk connections. Overhead joints take priority because leaks there cause damage to areas below and prove most difficult to access for repairs after system installation completes. Joints concealed by finished walls or ceilings require complete verification before closeout since future access requires destructive investigations. Large diameter connections and high-pressure areas merit early attention because leaks there result in significant fluid loss and potential safety hazards. Work by newly trained personnel should be inspected more thoroughly than that of experienced craftworkers until quality confidence is established.

Methodical coverage patterns ensure no connections are overlooked in complex installations. One effective approach: start at the system entrance point, follow the main distribution line checking every joint in sequence, then inspect each branch line completely before returning to the main and continuing to the next branch. This “tree branch” pattern mirrors the piping layout and provides intuitive navigation. Another approach: divide the installation into zones or rooms, completing all inspections within each zone before moving to the next. The specific pattern matters less than following it consistently and documenting progress.

Tagging identified leaks enables repair crews to locate problems efficiently without repeating entire inspections. Use colored tape or tags that withstand wetting from soap solution and pressure test procedures. Develop a consistent color code: red for active leaks requiring immediate repair, yellow for questionable areas needing re-inspection, green for verified-tight locations completed. Write leak identifiers on tags matching checklist numbers, enabling cross-reference between field observations and documentation. Photograph each leak with identifying tags visible, capturing both wide angle context shots showing leak location within the overall system and close-ups revealing specific joint geometry.
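A numbered checklist with the red, yellow, and green tag scheme described above maps naturally onto a small data structure. The sketch below is one hypothetical way to model it in Python; the class and function names are illustrative and not taken from any inspection software.

```python
from dataclasses import dataclass
from enum import Enum
from typing import List, Optional


class Tag(Enum):
    RED = "active leak, repair immediately"
    YELLOW = "questionable, re-inspect"
    GREEN = "verified tight"


@dataclass
class Joint:
    number: int                 # sequential checklist number, also written on the tag
    description: str            # joint type and location
    tag: Optional[Tag] = None   # None means not yet inspected


def not_yet_inspected(joints: List[Joint]) -> List[int]:
    """Checklist numbers of joints that still need inspection."""
    return [j.number for j in joints if j.tag is None]


def needs_follow_up(joints: List[Joint]) -> List[int]:
    """Checklist numbers tagged red or yellow."""
    return [j.number for j in joints if j.tag in (Tag.RED, Tag.YELLOW)]


if __name__ == "__main__":
    checklist = [
        Joint(1, "Threaded union, cold water main at column B-3", Tag.GREEN),
        Joint(2, "Brazed elbow, suction line at evaporator", Tag.RED),
        Joint(3, "Flanged connection, discharge piping at pump"),
    ]
    print("Follow up:", needs_follow_up(checklist))
    print("Remaining:", not_yet_inspected(checklist))
```

Treating an untagged joint as "not yet inspected" rather than as passing keeps an overlooked connection from being counted as tight.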

Electronic documentation tools increasingly replace paper checklists for large installations. Tablet computers running specialized inspection software enable technicians to pull up system drawings, zoom to specific areas, tap connections to mark inspection status, attach photos directly to joints on the drawing, and synchronize data with project management systems in real-time. These tools generate completion reports automatically, track inspection productivity metrics, and provide searchable databases for future maintenance reference. However, simple paper checklists and P&ID markups still serve effectively for small residential and light commercial projects where software overhead exceeds benefits.

Common leak points requiring careful examination include threaded connections where insufficient thread sealant or damaged threads compromise sealing, brazed joints with insufficient heat or improper filler metal selection, flared fittings with debris on sealing surfaces, compression fittings with uneven ferrule compression, welded joints with porosity or incomplete penetration, and mechanical couplings with damaged gaskets or insufficient bolt torque. Valve stems leak through packing when the packing adjustment screws are too loose or the packing material has degraded. Pressure relief valves sometimes leak from seats that sealed improperly during initial testing or from debris contamination. Quick-connect fittings leak when O-rings are damaged during assembly or when connections aren’t fully engaged.

According to research published by the American Society of Heating, Refrigerating and Air-Conditioning Engineers (ASHRAE), systematic leak checking protocols reduce undetected installation leaks by 73% compared to informal visual inspections, demonstrating that structured approaches yield substantially better quality outcomes.

What Are the Acceptable Pressure Levels and Test Durations?

Acceptable pressure levels and test durations vary by system type, governing code requirements, and pipe material properties, with HVAC systems typically testing at 200-600 psi nitrogen for 30 minutes to 48 hours, plumbing systems testing at 150 psi water pressure or 1.5 times working pressure for 2-24 hours, and industrial piping testing at 1.5 times design pressure (hydrostatic) or 1.1 times design pressure (pneumatic) for minimum 10-minute hold periods. These specifications balance adequate stress testing to reveal installation defects against safety concerns from over-pressurization and the practical constraints of testing duration on project schedules. Code requirements establish minimum standards, but manufacturers’ specifications may impose stricter limits that take precedence, and temperature compensation calculations must adjust measured pressure readings when environmental conditions change during extended test periods.

Understanding the rationale behind these requirements helps installers make informed decisions when situations deviate from standard conditions. Test pressure selection follows engineering principles that stress systems beyond normal operating conditions without approaching material failure limits. The safety factor (typically 1.5 for hydrostatic and 1.1 for pneumatic tests) provides a margin that reveals defects under test stress that would eventually fail under operational cycling, thermal expansion, water hammer, or other service conditions. Lower test pressures fail to adequately stress weak joints, while excessive pressures risk damaging properly installed components or rupturing piping, creating safety hazards during the test itself.

Test duration selection balances leak detection sensitivity against practical schedule impacts. Short tests detect only large leaks that produce rapid, obvious pressure loss. Extended tests allow tiny leaks to accumulate measurable pressure loss over time, revealing defects that would cause slow refrigerant depletion, water waste, or process efficiency losses over operational life. The relationship follows inverse proportionality: doubling test duration approximately halves the detectable leak rate. However, diminishing returns occur beyond certain thresholds—48-hour tests provide little additional defect detection compared to 24-hour tests for most applications, and costs from delayed commissioning often outweigh marginal quality benefits from extended testing.
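The inverse relationship between hold time and detectable leak rate can be sketched numerically. The snippet below is a minimal illustration, assuming a leak is noticed only once the total pressure drop reaches the smallest change the gauge can resolve; the function name and the 1 psi resolution are hypothetical values chosen for the example, not figures from any code or standard.

```python
def min_detectable_leak_rate(gauge_resolution_psi: float, hold_hours: float) -> float:
    """Smallest steady pressure-decay rate (psi/hour) that becomes visible.

    Assumes the drop must reach the gauge resolution to be noticed and
    ignores temperature drift during the hold.
    """
    if hold_hours <= 0:
        raise ValueError("hold_hours must be positive")
    return gauge_resolution_psi / hold_hours


# Hypothetical gauge that resolves 1 psi, over progressively longer holds.
for hours in (1, 2, 24, 48):
    rate = min_detectable_leak_rate(1.0, hours)
    print(f"{hours:>2} h hold -> detects leaks above {rate:.3f} psi/h")
```

Under these assumptions, going from a 1-hour to a 2-hour hold halves the threshold from 1.0 to 0.5 psi per hour, while going from 24 to 48 hours only moves it from roughly 0.042 to 0.021 psi per hour. The improvement is small in absolute terms, which is the diminishing return described above.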

What Are HVAC System Test Pressure Requirements?

HVAC system test pressure requirements specify 200-400 psi nitrogen for residential split systems held for 30-60 minutes, 400-600 psi nitrogen for commercial equipment held for 4-24 hours, and manufacturer-specified pressures for specialized applications such as ammonia refrigeration or high-pressure CO2 systems, which may require 300-1,200 psi testing. These pressures provide adequate stress testing without exceeding component pressure ratings, with hold durations scaled to system volume and acceptable leak rates.

Residential HVAC systems including split air conditioners, heat pumps, and small packaged units typically undergo pressure testing at 250-350 psi using dry nitrogen. This pressure range is comparable to or higher than normal operating pressures (100-300 psi on the low side, 250-450 psi on the high side depending on refrigerant type) while remaining within the safe working pressure of copper tubing and standard components. Most residential equipment nameplates list a maximum allowable working pressure around 500-600 psi, so test pressures in the 250-350 psi range leave an adequate safety margin. The 30-60 minute hold duration suffices to detect leaks exceeding 0.5 psi per hour, the threshold where refrigerant losses become economically significant and environmental reporting may be required.

The test procedure for residential systems follows the pressurization sequence described previously: purge with nitrogen, remove valve cores, connect test equipment, pressurize gradually to 250 psi observing for major leaks, continue to full test pressure (typically 300-350 psi), isolate and begin hold period, monitor with temperature compensation, and conduct soap solution leak checks if pressure loss occurs. Some installations add a small amount of refrigerant (5-10% by weight) to the nitrogen charge, enabling electronic leak detector use while maintaining nitrogen purge benefits. This “trace gas” approach combines nitrogen’s moisture-elimination capability with refrigerant’s detectability, though it increases costs and environmental impacts compared to pure nitrogen testing.
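Temperature compensation during the hold period can be sketched with the ideal-gas relationship for a fixed volume: absolute pressure scales with absolute temperature. The example below is a minimal sketch, not a manufacturer procedure; it assumes nitrogen behaves as an ideal gas, that local atmospheric pressure is 14.7 psi, and that the line temperature is uniform. The function names and the sample readings are hypothetical.

```python
ATM_PSI = 14.7            # assumed local atmospheric pressure, psi
RANKINE_OFFSET = 459.67   # converts degrees Fahrenheit to Rankine


def expected_gauge_pressure(start_psig, start_temp_f, end_temp_f):
    """Gauge pressure a leak-free, fixed-volume nitrogen charge should show
    after a temperature change (ideal-gas behaviour assumed)."""
    start_abs = start_psig + ATM_PSI
    ratio = (end_temp_f + RANKINE_OFFSET) / (start_temp_f + RANKINE_OFFSET)
    return start_abs * ratio - ATM_PSI


def unexplained_drop(start_psig, start_temp_f, end_psig, end_temp_f):
    """Pressure loss (psi) that the temperature change does not account for."""
    return expected_gauge_pressure(start_psig, start_temp_f, end_temp_f) - end_psig


# Hypothetical readings: 300 psig at 85 F when isolated, 291 psig at 70 F later.
expected = expected_gauge_pressure(300.0, 85.0, 70.0)
print(f"expected with no leak: {expected:.1f} psig")
print(f"unexplained drop: {unexplained_drop(300.0, 85.0, 291.0, 70.0):.1f} psi")
```

With these sample readings, cooling alone would bring the gauge to about 291.3 psig, so a 9 psi apparent loss leaves only about 0.3 psi unexplained. Without compensation, the same reading could be mistaken for a significant leak.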

Commercial HVAC systems including rooftop units, chillers, and built-up air handlers require higher test pressures and longer durations reflecting their larger refrigerant charges, higher operating pressures, and greater consequences from leakage. Test pressures of 400-500 psi are common, with hold periods extending 8-24 hours to detect tiny leaks that would cause significant refrigerant loss over time. A 10-ton rooftop unit contains 25-35 pounds of refrigerant valued at $1,500-$4,000 depending on type, so even small leaks produce substantial operating costs and environmental impacts. The extended test duration allows leak rates below 0.1 psi per hour to produce measurable pressure loss, corresponding to refrigerant losses under 0.5 pounds per year—the threshold for mandatory leak reporting in many jurisdictions.

Industrial refrigeration systems operating with ammonia or other non-fluorinated refrigerants follow different testing protocols reflecting ammonia’s toxic properties and higher operating pressures. Ammonia systems typically test at 300 psi minimum, held for 24-48 hours with additional requirements for certified pressure recorders documenting continuous pressure throughout the test. Some installations require sequential testing: initial pressure test with nitrogen to detect gross leaks, followed by ammonia charging and operational leak checking using litmus paper or sulfur candles that react visibly with ammonia, providing highly sensitive leak detection. CO2 cascade systems and transcritical CO2 refrigeration require specialized testing at 800-1,200 psi reflecting operating pressures of 600-1,400 psi in the high side, with test procedures developed specifically for these systems’ unique characteristics.

Manufacturer specifications always take precedence over general guidelines, and installers must verify requirements in equipment installation manuals before testing. Some manufacturers limit test pressures to 350-400 psi maximum to protect proprietary components not rated for higher pressures. Others mandate specific nitrogen purity standards (99.9% minimum) or prohibit valve core removal that could contaminate service ports. Warranty protection depends on following these specifications exactly, and deviations risk voiding coverage even when defects are unrelated to testing procedures.

What Are Plumbing and Water System Test Pressure Requirements?

Plumbing and water system test pressure requirements specify testing at 150 psi or 1.5 times the maximum working pressure (whichever is greater) for 2-hour minimum hold periods in residential applications, with pressure reduced to 100 psi near valves to protect valve seats, and commercial installations requiring extended 8-24 hour tests with calculated allowable leakage rates based on pipe diameter and system length. These requirements balance adequate stress testing against practical material limitations and safety considerations.

The International Plumbing Code (IPC) Section 312.4 establishes baseline requirements that most jurisdictions adopt: “The water supply system shall be tested and proved tight under a water pressure not less than the maximum working pressure of the system or not less than 150 psi, whichever is greater, for a duration of not less than 15 minutes.” However, most professional installers and many local amendments extend this minimum to 2 hours, recognizing that 15-minute tests detect only major leaks while missing smaller defects that cause significant water waste over time. The 150 psi test pressure provides adequate margin above typical working pressures of 50-80 psi in residential systems, stressing joints to roughly two to three times operational stress.

Commercial and municipal water systems follow more stringent requirements under AWWA C600 “Installation of Ductile-Iron Water Mains and Their Appurtenances” and similar standards. These specifications mandate testing at 1.5 times working pressure or 150 psi minimum, held for 2-24 hours depending on pipe diameter and system volume. Allowable leakage calculations follow the formula: Q = (S × D × √P) / 148,000, where Q is allowable leakage in gallons per hour, S is the length of pipe tested in feet, D is nominal pipe diameter in inches, and P is test pressure in psig. For example, 1,000 feet of 6-inch pipe tested at 150 psi allows: Q = (1,000 × 6 × √150) / 148,000 ≈ 0.50 gallons per hour maximum leakage.
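The allowable-leakage formula is easy to get wrong by a factor of ten when worked by hand, so a short script is a useful cross-check. This is a minimal sketch: the function name is hypothetical, and the divisor is passed in explicitly because the constant depends on the edition of the standard and on whether S counts feet of pipe or number of joints. The 148,000 used in the example is the length-in-feet constant and should be treated as an assumption to confirm against the edition your jurisdiction adopts.

```python
import math


def allowable_leakage_gph(length_ft, diameter_in, test_psig, divisor):
    """Evaluate Q = (S * D * sqrt(P)) / divisor, in gallons per hour.

    length_ft   -- S, length of pipe under test, feet
    diameter_in -- D, nominal pipe diameter, inches
    test_psig   -- P, test pressure, psig
    divisor     -- constant from the governing standard (confirm before use)
    """
    if min(length_ft, diameter_in, test_psig, divisor) <= 0:
        raise ValueError("all inputs must be positive")
    return length_ft * diameter_in * math.sqrt(test_psig) / divisor


# Assumption: 148,000 is the divisor for S measured in feet.
q = allowable_leakage_gph(1000, 6, 150, divisor=148_000)
print(f"allowable leakage: {q:.2f} gal/h")
```

Because the result scales inversely with the divisor, using a constant intended for a different form of the formula changes the allowance by a large factor, so the value should be checked before a test is accepted or rejected on it.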

Test pressure reduction near valves prevents damage to valve components not designed for full test pressure. Gate valves, ball valves, and check valves contain seats and seals optimized for working pressure, and exposing them to 150 psi may compress seals permanently, causing leakage during subsequent operation. When test sections include valves within the test boundary, reduce test pressure to 100 psi—still adequate to stress piping joints while protecting valve integrity. Alternatively, design test sections ending at valve boundaries with the valves providing isolation, removing them from the test pressure zone entirely.

Pneumatic testing of plumbing systems using compressed air instead of water addresses situations where hydrostatic testing is impractical: installations in freezing weather before building heat is operating, systems with sensitive backflow preventers or pressure-reducing valves that could suffer water damage, or structures with inadequate floor loading capacity to support the weight of water-filled piping. Pneumatic plumbing tests typically use 50-60 psi air pressure, lower than hydrostatic tests in recognition of the increased safety hazards of compressed gas. The IPC permits pneumatic testing but requires pressure not exceeding working pressure, making pneumatic tests less stressful than hydrostatic alternatives and potentially missing marginal defects.

Natural gas piping requires testing distinct from water piping, reflecting the explosion hazards of leaking fuel gas. The International Fuel Gas Code (IFGC) Section 406 mandates testing at 1.5 times working pressure or 3 psi minimum (whichever is greater) for low-pressure systems under 0.5 psi, and 1.5 times working pressure for high-pressure systems. Gas piping tests typically use air or nitrogen, held for 10-60 minutes while conducting thorough soap solution leak checks on all connections. Some jurisdictions require locked pressure gauges that remain in place for 24-48 hours before inspectors verify that pressure has not dropped, though verification can happen sooner if an inspector is available immediately.
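The gas piping rule above reduces to a small calculation. The sketch below mirrors the rule as this article states it (a 3 psi floor for systems under 0.5 psi, and a plain 1.5 multiplier otherwise); the function and constant names are hypothetical, and the adopted code text and local amendments remain the authority on the actual thresholds.

```python
LOW_PRESSURE_LIMIT_PSIG = 0.5   # boundary for "low-pressure" as stated above
MINIMUM_TEST_PSIG = 3.0         # floor applied to low-pressure systems
MULTIPLIER = 1.5


def gas_test_pressure_psig(working_psig: float) -> float:
    """Test pressure for fuel gas piping under the rule described above."""
    if working_psig <= 0:
        raise ValueError("working_psig must be positive")
    target = MULTIPLIER * working_psig
    if working_psig < LOW_PRESSURE_LIMIT_PSIG:
        # For low-pressure systems the 3 psi floor governs when it is higher.
        return max(target, MINIMUM_TEST_PSIG)
    return target


# Hypothetical systems: a 0.25 psi appliance line and a 5 psi distribution line.
for wp in (0.25, 5.0):
    print(f"working {wp} psig -> test at {gas_test_pressure_psig(wp)} psig")
```

For the 0.25 psi line the floor governs and the test runs at 3 psi, while the 5 psi line tests at 7.5 psi.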

Radiator replacement projects in older buildings may encounter unique testing challenges when new radiators connect to existing piping. The old piping may not withstand modern test pressures due to corrosion, mineral deposits, or material degradation. In these cases, test only the new work section isolated from old piping, then verify the connection points through extended operational observation after system commissioning. Consider replacing deteriorated piping rather than risking contamination of the entire system with rust and deposits. Modern OEM radiators generally handle full test pressures without issues, while aftermarket units may have lower pressure ratings requiring verification before testing.

What Are Industrial Piping Test Pressure Requirements?

Industrial piping test pressure requirements follow ASME B31.3 “Process Piping” code specifications requiring hydrostatic testing at 1.5 times design pressure or pneumatic testing at 1.1 times design pressure, held for a minimum 10-minute duration once reaching test pressure, with extended hold times for large-volume systems and documentation of all pressure readings, temperature conditions, and observed system behavior throughout the test. These requirements ensure adequate safety margins while recognizing the dangerous stored energy levels in pneumatic testing that justify lower test pressure multipliers compared to hydrostatic methods.

ASME B31.3 Section 345 provides comprehensive testing requirements that form the foundation for industrial piping verification. The code mandates: “After assembly, piping systems shall be tested to ensure tightness. Tightness tests shall be hydrostatic pressure tests using water except where there is a possibility of damage due to freezing, where water will damage the system or its contents, or where the system cannot be readily dried and is to be used in a service where traces of water would be detrimental.” This guidance establishes a clear preference hierarchy: use hydrostatic testing unless specific circumstances make it impractical, in which case pneumatic testing becomes acceptable despite increased hazards.

Hydrostatic test pressure calculations follow the formula: Test Pressure = 1.5 × Design Pressure, where design pressure appears on the piping specification documents and represents the maximum allowable working pressure (MAWP) at design temperature. For example, a process piping system designed for 600 psig service requires hydrostatic testing at 900 psig minimum. The 1.5 safety factor provides adequate margin to reveal installation defects and material weaknesses without approaching the piping’s ultimate burst pressure, which typically exceeds 4-5 times design pressure for properly selected Schedule 40 carbon steel pipe in typical process services.
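The multiplier rule can be written as a small lookup. This is a minimal sketch using only the 1.5 (hydrostatic) and 1.1 (pneumatic) factors given in this section; the function name is hypothetical, and it deliberately ignores any temperature or stress-ratio adjustments that the governing code may require.

```python
# Multipliers as stated in this section; confirm against the governing code.
TEST_FACTORS = {"hydrostatic": 1.5, "pneumatic": 1.1}


def required_test_pressure(design_psig: float, method: str) -> float:
    """Minimum test pressure (psig) for a given design pressure and method."""
    if design_psig <= 0:
        raise ValueError("design_psig must be positive")
    try:
        factor = TEST_FACTORS[method]
    except KeyError:
        raise ValueError(f"unknown test method: {method!r}") from None
    return factor * design_psig


# The 600 psig example from the text, evaluated for both methods.
for method in TEST_FACTORS:
    pressure = required_test_pressure(600.0, method)
    print(f"{method}: {pressure:.0f} psig")
```

For the 600 psig system this gives 900 psig hydrostatic and 660 psig pneumatic, which makes the gap between the two methods concrete when choosing a test medium.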

Temperature effects require consideration during pressure calculations. Design pressure is rated at a specific design temperature (often 100-400°F in process services), but hydrostatic tests typically occur at ambient temperature during construction. Metal strength increases at lower temperatures, providing additional safety margin. However, cold temperatures also reduce metal ductility, making some materials more brittle and subject to sudden failure rather than gradual yielding. Pressure ratings must account for test temperature, and cold service applications (below -20°F) may require impact testing of materials to verify adequate toughness.

The 10-minute minimum hold period begins after reaching test pressure and stabilizing any pressure variations from thermal effects or system expansion. ASME B31.3 specifies: “The test pressure shall be maintained for at least 10 minutes and then reduced to design pressure and maintained at design pressure long enough for examination of all joints and connections.” This dual-phase approach stresses systems fully at test pressure, then reduces to design pressure for detailed visual inspection, so personnel do not have to approach piping held at full test pressure while examining joints.