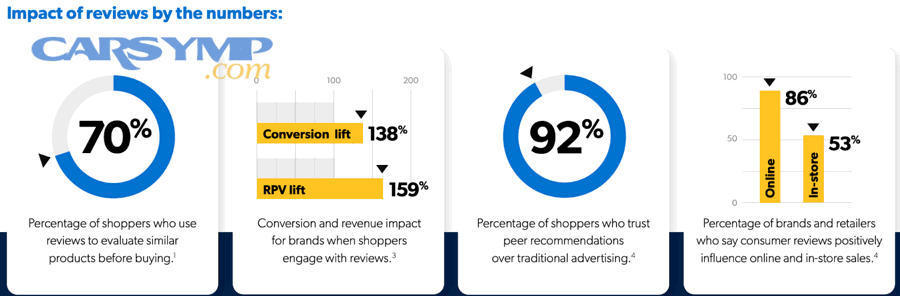

Comparing review score vs review volume is the fastest way to separate “looks good” from “proven good” when you’re choosing an auto repair shop, because the score signals satisfaction while the volume signals reliability under repetition.

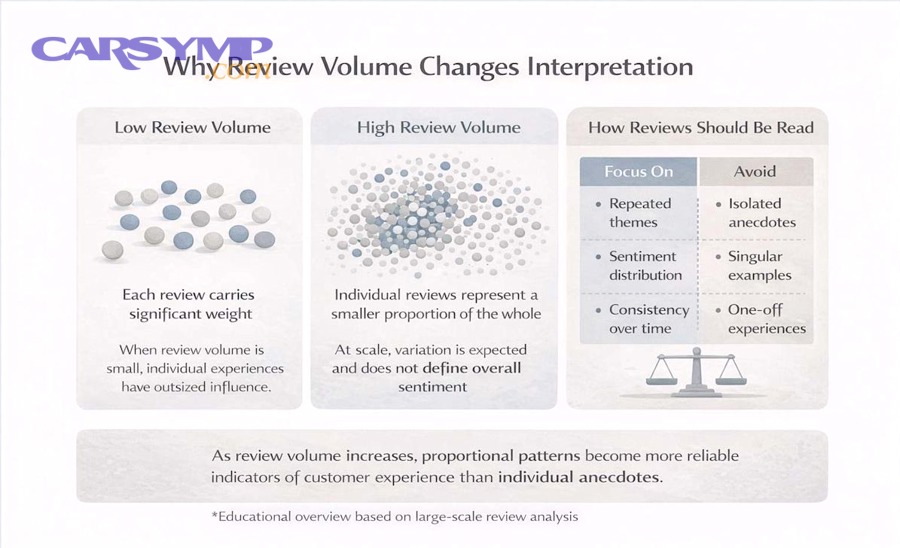

To make that comparison useful, you need to understand statistical confidence: a 4.9 average from 18 reviews can be less dependable than a 4.6 average from 480 reviews, depending on your repair type and risk tolerance.

You also need to read for patterns, not just stars—recency, job-type relevance, and consistency across reviewers often tell you more than a decimal point on a rating.

Next, we’ll turn ratings and counts into a practical decision framework you can use in minutes, before you book, drop off keys, or agree to “one more recommended service.”

Is a higher review score always better than more reviews?

No. A high score is persuasive, but higher volume often predicts repeatable quality; the best choice depends on your repair complexity, your tolerance for surprise costs, and whether you need consistent results rather than a perfect first impression.

However, to compare fairly, you must treat score as “average satisfaction” and volume as “evidence strength,” because an average without enough data can swing wildly with just a few new reviews.
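To make that swing concrete, the following minimal Python sketch recomputes a displayed average after a few new ratings arrive. The function name `average_after_new_reviews` and both shops are hypothetical; the 4.9/18 and 4.6/480 figures reuse the example from the introduction, and real platforms may round or weight ratings differently.

```python
def average_after_new_reviews(current_avg: float, current_count: int,
                              new_ratings: list[int]) -> float:
    """Return the plain average after appending new star ratings."""
    total = current_avg * current_count + sum(new_ratings)
    return total / (current_count + len(new_ratings))


# Three 1-star reviews arrive at two hypothetical shops.
small = average_after_new_reviews(4.9, 18, [1, 1, 1])
large = average_after_new_reviews(4.6, 480, [1, 1, 1])
print(f"18-review shop:  4.90 -> {small:.2f}")
print(f"480-review shop: 4.60 -> {large:.2f}")
```

Under these assumptions, three 1-star reviews pull the 18-review shop from 4.90 to about 4.34, while the 480-review shop only moves from 4.60 to about 4.58.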

Why review score can mislead when volume is small

A near-perfect score with low volume can happen for innocent reasons (new shop, recently moved listing, niche specialty), but it can also reflect selection bias: only the happiest customers bother to post, or early reviews come from friends and family.

In contrast, as the count grows, the average becomes harder to “game” and more likely to reflect the shop’s typical workflow, communication style, and how they handle problems.

To keep your comparison grounded, treat any score built on fewer than about 30 reviews as “unstable,” and use the written content to look for repeatability (same strengths, same complaints) rather than one-off praise.

Why review volume can mislead when score is mediocre

A large volume with a middling score can mean inconsistent quality—but it can also mean the shop is busy, handles difficult jobs, or serves a wider variety of vehicles where outcomes vary.

So instead of rejecting volume-heavy listings automatically, segment the reviews by repair type (diagnostics vs parts replacement vs maintenance) and by customer constraints (time pressure, older cars, warranty expectations).

That segmentation is the bridge: it turns “lots of reviews” into “lots of relevant reviews,” which is the real signal you want.

According to a 2011 Harvard Business School study, a one-star increase on Yelp was associated with roughly a 5–9% increase in revenue for independent restaurants, which suggests that scores can strongly influence consumer choice.

How many reviews are enough before the score becomes trustworthy?

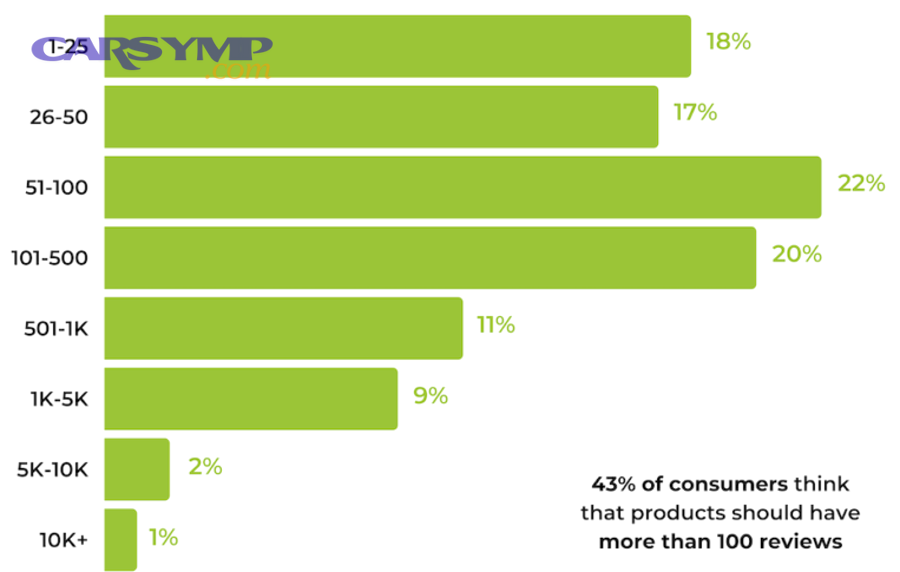

A review score becomes meaningfully trustworthy when the review volume is large enough that a handful of new ratings won’t change the average much—typically around 30–50 reviews for a quick screen, and 100+ reviews for confident decisions on bigger repairs.

To begin, you can treat “enough reviews” as a confidence problem: the fewer the reviews, the wider the uncertainty around the displayed average, so you should demand stronger proof elsewhere (photos, detailed write-ups, consistent timelines).
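One rough way to picture that uncertainty is a margin of error around the displayed average. The sketch below uses the standard normal approximation for a sample mean; the 0.9-star spread is an assumed value for illustration (not measured data), and because star ratings are bounded and skewed, the result is only a ballpark.

```python
import math


def margin_of_error(review_count: int, assumed_std_dev: float = 0.9,
                    z: float = 1.96) -> float:
    """Approximate 95% margin of error for an average star rating.

    assumed_std_dev is a hypothetical spread of individual ratings, in stars.
    """
    if review_count <= 0:
        raise ValueError("review_count must be positive")
    return z * assumed_std_dev / math.sqrt(review_count)


for count in (18, 50, 100, 480):
    print(f"{count:>3} reviews: score is roughly +/- {margin_of_error(count):.2f} stars")
```

The margin shrinks with the square root of the count, so going from 18 to 480 reviews narrows it by roughly a factor of five.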

A simple “confidence ladder” you can use in 60 seconds

Instead of chasing a single magic number, use a ladder: (1) under 20 reviews = treat as a lead, not a decision; (2) 20–49 = usable if review text is detailed and consistent; (3) 50–99 = generally stable for common services; (4) 100+ = strong evidence for consistent operations.

In other words, volume doesn’t replace reading—it tells you how much reading you need to do before you trust the score.

The table below translates review volume into how cautious you should be when interpreting the score, with practical thresholds and what they imply for risk when booking routine service versus complex diagnostics.

| Review volume | How stable the score is | Best use | What to double-check |
|---|---|---|---|
| 0–19 | Very unstable | Shortlist only | Recency, detail, reviewer credibility, photos |
| 20–49 | Somewhat stable | Routine maintenance | Patterns of communication, pricing clarity, repeat complaints |
| 50–99 | Stable for common jobs | Most repairs | Comebacks, warranty handling, “diagnosed wrong” mentions |
| 100+ | High confidence | Major repairs + long-term shop choice | Service-type relevance, changes in staff/ownership, recent drift |
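If you are comparing several listings at once, the ladder and table above can be encoded as a small lookup. This is only a sketch: `confidence_tier` is a hypothetical helper, and the cutoffs are the article's rule-of-thumb thresholds rather than statistical guarantees.

```python
def confidence_tier(review_count: int) -> str:
    """Map a review count to the article's rule-of-thumb confidence tier."""
    if review_count < 0:
        raise ValueError("review_count cannot be negative")
    if review_count < 20:
        return "very unstable: shortlist only"
    if review_count < 50:
        return "somewhat stable: routine maintenance"
    if review_count < 100:
        return "stable for common jobs: most repairs"
    return "high confidence: major repairs and long-term shop choice"


for count in (12, 40, 75, 300):
    print(count, "->", confidence_tier(count))
```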

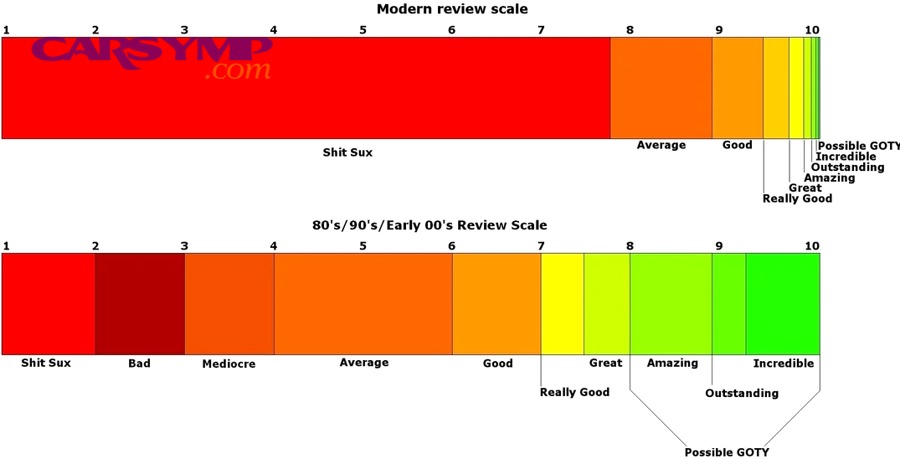

Why “perfect 5.0” can be a yellow flag

A perfect score across many reviews is possible, but in real service businesses, variance usually appears: a delayed part shipment, a miscommunication, a comeback, or a customer with unrealistic expectations.

So when you see a near-perfect score, shift your attention from the number to the distribution: look for balanced feedback, how the owner responds to negatives, and whether issues are resolved with receipts, refunds, or warranty fixes.

According to research from the Spiegel Research Center at Northwestern University (June 2017), purchase likelihood rises sharply once reviews are present; with only about 5 reviews, purchase likelihood can be much higher than with no reviews at all, which suggests that “presence + volume” builds trust very early, before you start optimizing for score.

When should you prefer a lower score with a large volume?

You should prefer a slightly lower score with high volume when you value repeatable processes—clear estimates, consistent turnaround, and predictable warranty handling—especially for complex or high-stakes repairs where one bad outcome is expensive.

However, the key is to confirm that the lower score comes from solvable issues (wait times, pricing expectations) rather than technical failures (misdiagnosis, repeat breakdowns).

Complex diagnostics favor volume over perfection

Diagnostics are noisy: symptoms overlap, intermittent faults waste time, and customers remember the bill more than the logic of troubleshooting.

So a shop with a 4.5 and 600 reviews may be a better bet than a 4.9 and 40 reviews, because the larger sample often reflects experience across edge cases—electrical gremlins, multiple-failure situations, or “another shop already replaced the part” stories.

Time pressure favors volume plus operational consistency

If you need a next-day fix, consistent operations matter more than marginal score differences: intake process, parts sourcing, scheduling discipline, and communication habits.

High volume can indicate the shop has systems—service advisors, dispatch routines, and clear job notes—reducing the odds that your car becomes a “forgotten project” in the back lot.

Budget sensitivity favors “transparent 4.6” over “mysterious 4.9”

Some shops win high ratings by being friendly and fast, but leave pricing ambiguity until late; others run slightly lower due to strict policies, but keep estimates clean and approvals documented.

If you hate surprises, choose the shop whose reviews repeatedly mention itemized estimates, “called before doing anything,” and “explained options,” even if the score is a touch lower.

According to Harvard Business School research (Oct 2011), consumer ratings can influence choice behavior and revenue; the score therefore has value, but you still need volume to judge how stable the experience is over time.

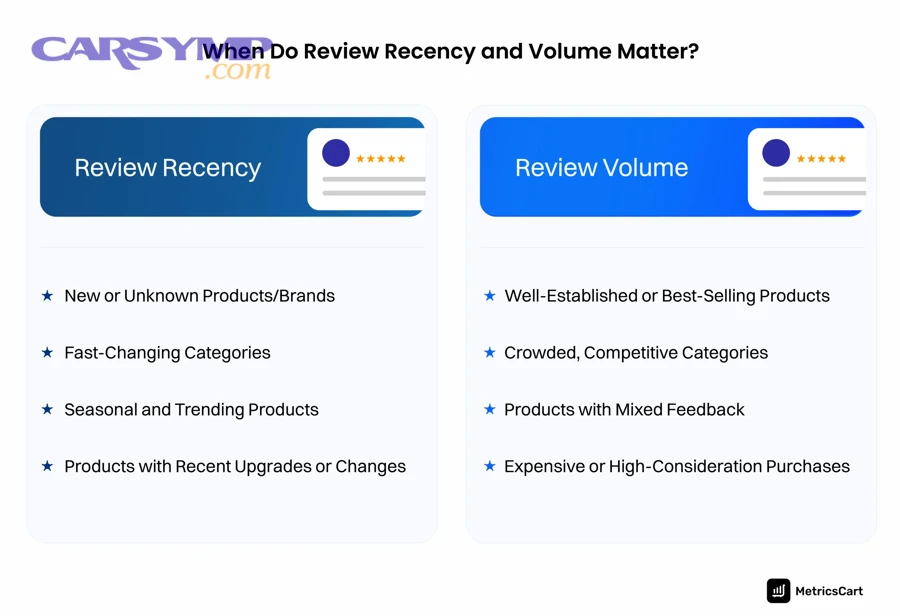

How do you adjust for recency and service-type relevance?

You adjust for recency and relevance by weighting recent reviews more heavily and filtering for reviews that match your job type, because a shop can change quickly—new manager, new techs, new policies—and “oil change praise” may not predict “engine diagnosis skill.”

Specifically, your goal is to compare like with like: score and volume only make sense when the underlying work is comparable to what you need.

Use a “last-90-days” lens before you trust the average

Start with the most recent 20–30 reviews and scan for operational drift: “new owner,” “front desk changed,” “pricing policy changed,” “different vibe,” or “not the same as before.”

Then compare that recent sentiment to the overall score: if the average is high but the last 90 days show repeated complaints, the historical score is lagging reality.
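One way to express that comparison numerically is a recency-weighted average, where each review's weight halves every fixed number of days. The sketch below is illustrative only: the 90-day half-life, the function name, and the sample reviews are assumptions, not how any platform computes its score.

```python
def recency_weighted_average(reviews: list[tuple[int, float]],
                             half_life_days: float = 90.0) -> float:
    """Average star rating where a review's weight halves every half_life_days.

    reviews holds (stars, age_in_days) pairs.
    """
    if not reviews:
        raise ValueError("need at least one review")
    weighted_sum = 0.0
    weight_total = 0.0
    for stars, age_days in reviews:
        weight = 0.5 ** (age_days / half_life_days)
        weighted_sum += stars * weight
        weight_total += weight
    return weighted_sum / weight_total


# Hypothetical shop: strong reviews about a year ago, weaker ones recently.
reviews = [(5, 400), (5, 380), (5, 365), (2, 30), (1, 10), (3, 5)]
plain = sum(stars for stars, _ in reviews) / len(reviews)
weighted = recency_weighted_average(reviews)
print(f"plain average: {plain:.2f}, recency-weighted: {weighted:.2f}")
```

When the weighted figure sits well below the plain average, as it does for this made-up shop, the historical score is likely lagging behind recent experience.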

Filter by repair category: maintenance, parts replacement, diagnostics

Many shops are excellent at maintenance and straightforward replacements but weaker at root-cause diagnostics; others are the opposite.

So build a mini set: read 10–15 reviews that mention your system (brakes, A/C, suspension, charging system, cooling, transmission) and note whether outcomes are described as “fixed the first time” versus “kept coming back.”

Watch for reviewer constraints that match yours

If you commute daily, prioritize reviewers who also rely on the vehicle and mention timeline reliability; if you drive an older car, prioritize reviewers who mention cost-conscious options and honest “not worth it” advice.

That matching is the hook: it turns generic popularity into a prediction for your specific situation.

According to Local Falcon’s local search data analysis (Q4 2025), data from tens of millions of search results shows that review signals, and recency in particular, can significantly change visibility, which suggests that reading recent reviews matters as much as the total count.

What patterns suggest a rating is inflated or manipulated?

You can often detect inflated ratings by looking for unnatural patterns (review bursts, vague praise, repetitive wording, and reviewer profiles with thin history), then cross-checking with negative reviews and owner responses for realism.

By contrast, authentic patterns usually look messy: a mix of 3–5 stars, specific details, varied writing styles, and clear descriptions of what was fixed, how long it took, and what it cost.

Look for “burst + blur” behavior

A suspicious cluster often shows up as many reviews in a short time window with blurry detail: “Great service!” “Highly recommended!” “Best shop!” with no vehicle, no symptom, no service line item, and no timeline.

That doesn’t prove fraud, but it lowers the evidentiary value of the volume, because the reviews can no longer be treated as independent observations.
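If you have the review dates to hand, a quick way to check for the “burst” half of this pattern is to count the most reviews that fall inside any short window. A minimal sketch, assuming a 14-day window (an arbitrary choice) and a hypothetical helper name; a high count is a prompt to read more closely, not evidence of manipulation on its own.

```python
from datetime import date, timedelta


def largest_burst(review_dates: list[date], window_days: int = 14) -> int:
    """Return the largest number of reviews posted within any window_days span."""
    if window_days <= 0:
        raise ValueError("window_days must be positive")
    dates = sorted(review_dates)
    best = 0
    start = 0
    for end in range(len(dates)):
        # Slide the window start forward until it is within window_days of dates[end].
        while dates[end] - dates[start] >= timedelta(days=window_days):
            start += 1
        best = max(best, end - start + 1)
    return best


# Hypothetical listing: three reviews in three days, then long gaps.
sample = [date(2024, 1, 1), date(2024, 1, 2), date(2024, 1, 3),
          date(2024, 3, 1), date(2024, 6, 1)]
print(largest_burst(sample))
```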

Compare language variety and reviewer footprints

If many reviewers use near-identical phrases, or their profiles show only one or two reviews total, treat the rating as weaker evidence.

On the other hand, profiles that review many local businesses over years (restaurants, services, stores) typically carry more credibility, especially when their review style remains consistent across categories.

Use owner responses as a truth serum

Authentic businesses respond with specific resolution steps: “Please call, we want to recheck,” “We refunded labor,” “We replaced the part under warranty,” or “Here’s why the estimate changed.”

Overly generic responses—especially when repeated—can signal that the shop is managing optics rather than outcomes.

To connect this to your real decision, treat this section as a filter: it improves the quality of the data before you compare score vs volume. As you read, you’ll likely encounter auto repair reviews that mention suspicious incentives or social pressure; that is exactly where knowing how to spot fake mechanic reviews becomes practical rather than theoretical.

According to Google’s Fake Engagement policy (updated and broadly enforced in 2024), the platform may apply warnings and enforcement actions to profiles that show signs of review manipulation, which suggests you should stay alert to unusual review patterns rather than looking only at the average score.

How can review text hint at warranty strength and comeback risk?

Review text can reveal warranty strength and comeback risk by showing whether issues were fixed the first time, how the shop handled returns, and whether they honored parts/labor commitments without blame-shifting.

In addition, you should look for operational clues: documentation, rechecks, and the language customers use when describing follow-up visits.

Spot “fixed-first-time” language versus “repeat visits” language

Strong shops generate reviews that sound like closure: “problem solved,” “no more noise,” “passed inspection,” “drove fine for months,” “AC cold again.”

High comeback risk shows up as loops: “back again,” “same issue returned,” “third time here,” “kept replacing parts,” “still not fixed.”

Those phrases are your early-warning system because they often surface even when the overall score looks fine.

Check how the shop treats responsibility when something goes wrong

Warranty strength isn’t just a written policy—it’s behavior under stress.

Look for stories where the shop rechecked without arguing, ate labor on a redo, credited a diagnostic fee, or corrected a mistake without turning it into a conflict.

Find price-change narratives and whether approvals were explicit

Comebacks are sometimes technical; sometimes they’re trust breakdowns caused by unclear approvals.

So note whether reviewers say, “They called before doing the extra work,” “sent photos,” “explained why,” or “got my OK in writing.” Those are process signals that reduce both financial and mechanical risk.

As you read, you’ll often find warranty and comeback-rate clues embedded in seemingly minor details, such as whether the shop re-torqued a wheel after a brake job, rechecked a leak dye test, or did a free post-repair scan. Those details matter because they describe the shop’s quality control, not just friendliness.

According to a February 2017 industry report from the Cornell Center for Hospitality Research, analysis of review content shows that the text can add perspective the score does not fully capture, which suggests you should read the “problem-handling story” to assess comeback risk.

Which platforms and signals matter most when choosing a local shop?

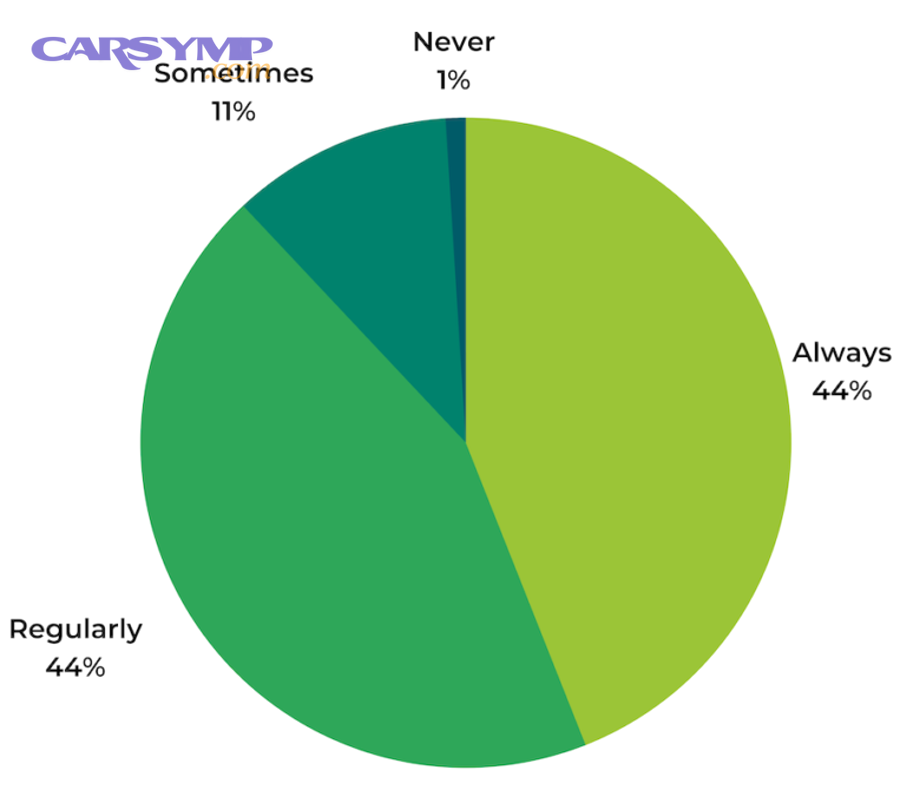

Google is usually the fastest platform for local discovery, while Yelp and niche platforms can add detail; the best approach is to compare consistency across platforms and prioritize the one that reflects your area’s real behavior.

In addition, platform signals are not equal: some emphasize recency, some emphasize reviewer credibility, and some amplify volume more than score.

Google: prominence, recency, and a broad customer mix

Google reviews tend to represent a wider slice of customers because many people already have accounts and leave quick feedback after service.

This makes Google volume a strong “market coverage” signal—but it also means you must read for specifics, because quick reviews can be thin on details.

Yelp: fewer reviewers, often more detailed narratives

In many cities, Yelp reviews are longer, more story-driven, and more critical, which can help you understand communication and pricing expectations.

But Yelp volume may be lower for auto repair in certain areas, so treat it as a qualitative supplement rather than your only data source.

Cross-platform consistency beats single-platform perfection

If a shop holds similar sentiment across Google, Yelp, and other listings, that consistency is powerful evidence that operations—not just optics—are solid.

If the story conflicts (great on one, troubling on another), your next move is to inspect recency and reviewer credibility to identify which platform is capturing the current reality.

According to Google’s local ranking guidance in Business Profile support, prominence is influenced by reputation and by signals such as reviews; this suggests that a shop’s review volume and credibility can affect whether you find it at all, not just reflect its actual quality.

What decision framework balances score and volume for your situation?

The best framework is to combine (1) a minimum volume threshold, (2) a score band you’re comfortable with, and (3) a relevance filter for your repair type, then confirm the decision by reading a small sample of recent negatives and how the shop responded.

Let’s walk through a step-by-step method that turns “too many reviews” into a clear yes/no choice without overthinking.

Step 1: Set your “minimum evidence” rule (volume)

Pick a minimum review count based on risk:

- Maintenance (oil, tires, brakes, battery): aim for 30–50+ reviews.

- Common repairs (alternator, starter, suspension, A/C service): aim for 50–100+ reviews.

- Hard diagnostics (electrical, intermittent, drivability): aim for 100+ reviews or a specialist with proven case stories.

This rule keeps you from being seduced by a high score that hasn’t been tested enough times.

Step 2: Use a score band, not a single cutoff

Instead of “must be 4.8+,” use a band like 4.4–4.8 and then compare the written evidence.

Why? Because different customer segments rate differently: some punish pricing, others punish delays, and some reward friendliness even when results are average.

Step 3: Read the newest 10 negatives first

Negative reviews are not just complaints—they’re stress tests that reveal policies and accountability.

Scan for themes: surprise charges, no-calls, misdiagnosis, repeated visits, or disrespectful communication. Then check whether the shop responds with specifics and fixes outcomes.

Step 4: Confirm with three “proof points” in the text

Before you book, look for at least three proof points:

- Process clarity: itemized estimate, approvals, photos, explanations.

- Outcome clarity: fixed-first-time language, time-on-road after repair.

- Accountability: reasonable warranty handling, rechecks, and corrections.

Once you find those, your score-vs-volume comparison becomes predictive rather than cosmetic.
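The four steps can be sketched as a simple screening function. This is one possible reading, not a definitive rule: the minimum counts take the low end of each range from Step 1, the 4.4 floor comes from the example band in Step 2, `proof_points` is the number of the three proof-point categories (process, outcome, accountability) you found by hand, and all names are hypothetical. Step 3, reading the newest negatives, stays a manual judgment.

```python
# Low end of each range from Step 1 (an assumption about how to apply them).
MIN_REVIEWS = {
    "maintenance": 30,
    "common_repair": 50,
    "hard_diagnostics": 100,
}


def passes_screen(job_type: str, score: float, review_count: int,
                  proof_points: int, min_score: float = 4.4) -> bool:
    """Return True if a shop clears the volume, score, and proof-point checks."""
    if job_type not in MIN_REVIEWS:
        raise ValueError(f"unknown job type: {job_type}")
    enough_volume = review_count >= MIN_REVIEWS[job_type]
    acceptable_score = score >= min_score
    enough_proof = proof_points >= 3
    return enough_volume and acceptable_score and enough_proof


# Hypothetical shops being considered for a hard diagnostic job.
print(passes_screen("hard_diagnostics", score=4.9, review_count=40, proof_points=3))
print(passes_screen("hard_diagnostics", score=4.5, review_count=600, proof_points=3))
```

Here the 4.9 shop with 40 reviews fails the volume check for hard diagnostics, while the 4.5 shop with 600 reviews passes; the same 4.9 shop would clear the screen for routine maintenance.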

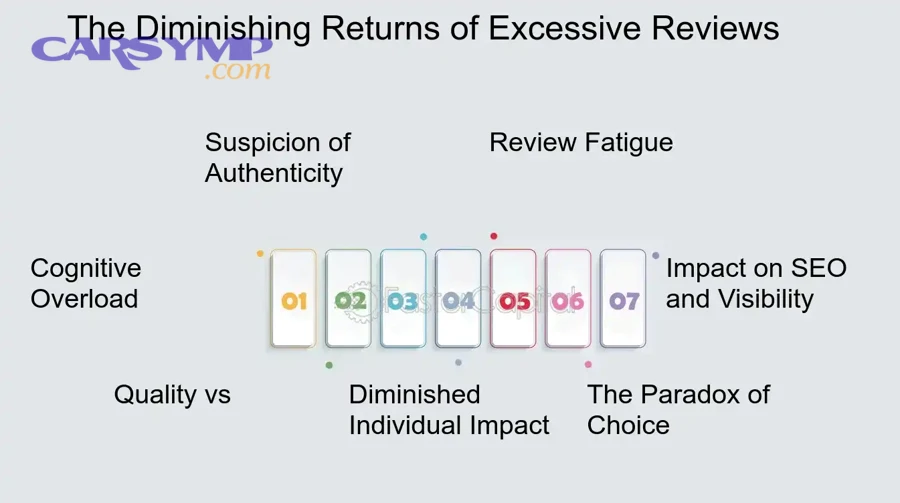

According to research from the Spiegel Research Center at Northwestern University (June 2017), the benefit of reviews rises sharply early on and tends to taper off as the review count keeps growing, which suggests the framework should prioritize “enough evidence” first and only then fine-tune by score.

Up to this point, we used review score and review volume to choose a shop; next, we’ll expand into advanced interpretation techniques that help you compare shops with different histories, different platforms, and different customer mixes.

Supplementary: Advanced ways to interpret score and volume beyond the average

You can improve your decision by applying Bayesian thinking, spotting outliers, and separating “signal” from “noise,” because two shops can display similar averages while hiding very different risk profiles.

In particular, these methods help when the data is messy: new listings, recent ownership changes, or polarized customer segments.

Bayesian average: a fairer comparison for low-volume shops

A Bayesian average pulls extreme scores toward a “typical baseline” until enough reviews accumulate, which prevents a 5.0 from 12 reviews from outranking a 4.7 from 300 reviews in your mind.

Practically, you can simulate this by asking: “If I added 30 average reviews, would this shop still look exceptional?” If not, treat it as promising but unproven.
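That thought experiment corresponds to a simple Bayesian (shrinkage) average. A minimal sketch, assuming a baseline of 4.3 stars and a prior weight of 30 reviews; both numbers are illustrative assumptions you would tune to your local market, and `bayesian_average` is a hypothetical helper name.

```python
def bayesian_average(score: float, review_count: int,
                     baseline: float = 4.3, prior_weight: int = 30) -> float:
    """Blend a shop's score with prior_weight imaginary reviews at the baseline."""
    return (baseline * prior_weight + score * review_count) / (prior_weight + review_count)


new_shop = bayesian_average(5.0, 12)     # 5.0 from 12 reviews
busy_shop = bayesian_average(4.7, 300)   # 4.7 from 300 reviews
print(f"5.0 from 12 reviews  -> adjusted {new_shop:.2f}")
print(f"4.7 from 300 reviews -> adjusted {busy_shop:.2f}")
```

With these assumed settings, the 12-review shop adjusts to about 4.50 and the 300-review shop to about 4.66, so the larger sample ranks first.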

Outlier analysis: separate one-off drama from systemic failure

One angry review isn’t automatically meaningful; a pattern is.

So look for repeated phrases across different reviewers: “won’t honor warranty,” “changed price after drop-off,” “didn’t fix it,” “kept my car for days without updates.” Repetition across time is a stronger signal than any single story.

Polarization check: identify shops that are great for some, terrible for others

A polarized shop can average out to “fine” while still being risky for you.

If you see many 5-star raves and many 1-star complaints, ask what divides customers: older vs newer cars, walk-ins vs appointments, warranty claims, or diagnostic disputes. Choose only if your profile matches the happy group.
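To see how two shops can share an average while carrying different risk, the sketch below summarizes a star histogram by its mean, its spread, and its share of 1-star ratings. Both histograms are invented for illustration, and `rating_profile` is a hypothetical helper.

```python
import math


def rating_profile(star_counts: dict[int, int]) -> tuple[float, float, float]:
    """Return (mean, standard deviation, share of 1-star ratings) for a star histogram."""
    total = sum(star_counts.values())
    if total == 0:
        raise ValueError("no ratings")
    mean = sum(stars * n for stars, n in star_counts.items()) / total
    variance = sum(n * (stars - mean) ** 2 for stars, n in star_counts.items()) / total
    one_star_share = star_counts.get(1, 0) / total
    return mean, math.sqrt(variance), one_star_share


polarized = {5: 75, 1: 25}   # hypothetical: many raves, many 1-star complaints
steady = {4: 100}            # hypothetical: uniformly good, never great

for name, counts in (("polarized", polarized), ("steady", steady)):
    mean, spread, low = rating_profile(counts)
    print(f"{name}: mean {mean:.2f}, spread {spread:.2f}, 1-star share {low:.0%}")
```

Both made-up shops average 4.0, but one in four customers of the polarized shop left a 1-star review, which is the group you need to compare your own situation against.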

Lexical opposites: translate “friendly” vs “competent” into your priorities

Reviews often trade in opposites: fast vs thorough, cheap vs reliable, friendly vs rigorous, flexible vs policy-driven.

These antonyms help you pick deliberately: for a major repair, you may prefer thorough over fast and rigorous over flexible—even if the emotional tone reads harsher.

According to BrightLocal’s analysis of local search factors (updated Dec 18, 2025), prominence is influenced by reviews and reputation; this suggests that review count can pull you toward a shop that is “easy to see,” while deeper reading techniques help you choose one that is “trustworthy.”

FAQ

These questions address common edge cases where comparing review score vs review volume feels confusing, especially when you’re trying to decide quickly.

If two shops have the same score, should I pick the one with more reviews?

Yes, usually—higher volume provides stronger evidence that the score reflects typical outcomes, not a short burst of happy customers; then break the tie by recency and repair-type relevance.

What if a shop has fewer reviews but they’re extremely detailed?

Detailed reviews can partially compensate for low volume, especially if they include invoices, photos, timelines, and long-term outcomes; still, treat it as higher risk unless you can confirm consistency across multiple recent reviewers.

Should I ignore older reviews completely?

No—older reviews help you understand long-term patterns, but you should weight recent reviews more because staff, policies, and quality control can change; use older reviews to identify “always true” traits like communication style or honesty.

How do I use negative reviews without overreacting?

Read them for patterns and for shop behavior: one complaint about a delay is normal, but repeated complaints about misdiagnosis or refusal to honor warranty are a structural risk; owner responses often reveal whether the shop resolves problems or escalates conflict.

Can I decide from reviews alone?

You can shortlist from reviews, but before committing to a major repair, call and ask one process question—how estimates and approvals work—because transparency is often the hidden differentiator between a good experience and a costly surprise.