Cleaning only counts as “improved” when you can show a measurable drop in residues and/or microbes at the same risk points, using the same sampling method, against a defined pass/fail limit. That means you don’t rely on “looks clean”—you rely on numbers you can trend.

Then, you need a repeatable before-and-after plan: where you swab, when you swab (pre-op vs post-clean), how you swab (area, pattern, pressure), and what “pass” means for each zone and surface type—so results are comparable shift to shift.

Next, you choose the right microbiological checks to confirm what ATP can’t: indicator organisms, total counts, and—when risk demands it—pathogen or environmental monitoring methods that match the hazard and the product category.

Once the method is stable, your goal shifts from “Did today’s cleaning work?” to “Are we sustaining improvement over time?”, with trend rules, escalation triggers, and corrective actions that predictably move the numbers.

What does “verified cleaning improvement” mean (and what does it NOT mean)?

Verified cleaning improvement means you can document a statistically and operationally meaningful reduction in contamination indicators (ATP/RLU, protein/allergen residue, and/or microbial counts) at defined control points, using the same method, with results that meet your acceptance criteria over time.

To better understand why that definition matters, focus on what “verified” excludes: a one-off good day, a subjective visual check, or a metric that is not comparable because the method changed.

What counts as “improvement” in QA terms?

Improvement is repeatable capability, not a single reading. In practice, QA teams usually treat improvement as a combination of:

- Level shift: median/mean results improve (e.g., post-clean RLUs drop materially vs baseline).

- Reduced variation: fewer spikes and fewer borderline results.

- Higher first-pass rate: more sites pass without re-clean.

- Lower risk at the “hard spots”: improvements show up at hygienic design pain points—gaskets, threads, niches, drains, filler heads.

If you can’t show at least one level shift plus stability (variation reduction) at the same sampling points, you have not verified improvement—you have observed a possibly lucky outcome.
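If you track results in a spreadsheet export or a small script, you can make the level-shift-plus-stability test explicit. The Python sketch below is one way to do it. It assumes the median as the level measure, the interquartile range (IQR) as the spread measure, and a single RLU pass limit. The function names and numbers are illustrative, not a standard.

```python
from statistics import median, quantiles

def summarize(rlus, limit):
    """Median, interquartile range, and first-pass rate for one batch of post-clean RLUs."""
    q1, _, q3 = quantiles(rlus, n=4)  # quartile cut points (default 'exclusive' method)
    return {
        "median": median(rlus),
        "iqr": q3 - q1,
        "first_pass_rate": sum(r <= limit for r in rlus) / len(rlus),
    }

def shows_improvement(baseline, current, limit):
    """True only if the level shifted down AND the spread did not grow."""
    b, c = summarize(baseline, limit), summarize(current, limit)
    return c["median"] < b["median"] and c["iqr"] <= b["iqr"]

# Hypothetical post-clean RLUs at the same control points.
baseline = [220, 310, 180, 450, 260, 390, 510, 240]
current = [140, 160, 120, 190, 150, 170, 130, 180]
print(shows_improvement(baseline, current, limit=300))  # True
```

The function requires both conditions, which mirrors the rule above: a lower median with a wider spread does not yet count as verified improvement.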

What verified improvement is NOT

A program can look better while being unchanged (or worse) if the method is biased. Common false signals include:

- Swabbing “easy” spots (flat, accessible panels) instead of true risk points.

- Changing swab area (e.g., 10×10 cm one day, “about the size of a hand” the next).

- Sampling at different times (immediately post-sanitize vs after re-soiling/production start).

- Cleaner chemistry interference with ATP signals (quenching or inflating readings). Research has shown disinfectant chemistry can interfere variably with ATP meter readings. (journals.plos.org)

Why ATP is useful—but limited

ATP is a powerful process control tool because it’s fast and sensitive to organic residues (food soils, bio-residues). But ATP:

- Does not directly identify specific microbes or pathogens.

- Can be influenced by non-microbial ATP (food residues) and by chemical residues from cleaners/sanitizers.

- Requires site-specific thresholds and consistent technique to stay meaningful.

This is why strong programs use ATP for rapid verification and periodic microbiological confirmation—especially for high-risk lines and zones.

How do you run a before-and-after ATP swab plan that proves improvement?

A before-and-after ATP swab plan proves improvement by using the same high-risk sampling map, the same timing, and the same swabbing technique to demonstrate lower post-clean RLUs across enough samples to rule out chance—then locking that method into routine monitoring.
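One way to rule out chance with a modest number of swabs is a one-sided permutation test on the difference in medians between the baseline batch and the post-change batch. This is an illustration only; the sources above do not specify this method. The sketch uses only the Python standard library, and the iteration count and seed are arbitrary choices.

```python
import random
from statistics import median

def permutation_p_value(baseline, candidate, n_iter=10_000, seed=1):
    """One-sided permutation test: estimate how often randomly relabeling the
    pooled readings gives a median drop at least as large as the observed one."""
    observed = median(baseline) - median(candidate)
    pooled = list(baseline) + list(candidate)
    rng = random.Random(seed)          # fixed seed so reruns give the same answer
    k = len(baseline)
    hits = 0
    for _ in range(n_iter):
        rng.shuffle(pooled)
        if median(pooled[:k]) - median(pooled[k:]) >= observed:
            hits += 1
    return (hits + 1) / (n_iter + 1)   # add-one correction avoids reporting p = 0

# Hypothetical post-clean RLUs at the same control points, old vs new procedure.
old = [400, 380, 450, 500, 420, 390, 470, 410, 430, 460]
new = [150, 170, 140, 160, 180, 130, 190, 155, 165, 175]
print(permutation_p_value(old, new))
```

A small p-value (for example, below 0.05) suggests the drop is unlikely to come from random variation alone. It says nothing about whether the points, timing, and technique stayed identical, which is why the method controls below still matter.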

Next, you convert “we should swab more” into a controlled design: points, frequency, limits, and decision rules.

Site mapping: where to swab (and why “control points” beat random swabs)

Start with a cleaning verification map that matches your hygienic zoning and hazard analysis:

- Zone 1 (food/product contact): direct contact surfaces (belts, fillers, chutes, hoppers).

- Zone 2 (close-to-contact): framework near Zone 1, guards, tool holders.

- Zone 3–4 (non-contact / remote): floors, drains, forklifts—still important for environmental monitoring.

Within each zone, choose control points that are:

- High soil load or high touch

- Hard to clean (crevices, gaskets, threads)

- Historically linked to deviations, complaints, or positives

A strong plan typically includes a mix of “typical” points and “worst-case” points. If your “worst-case” points improve, you can trust the cleaning method change.

Timing: when to swab for true before/after comparability

To show improvement (not randomness), standardize:

- Before sample: after production (or controlled soiling), before pre-rinse/foam (define this clearly).

- After sample: after the full cleaning cycle and after the required contact time, with rinse status defined (ATP can be skewed if sanitizer chemistry remains).

If operations require “pre-op” checks, define “after” as just before start-up, not “immediately after sanitation,” unless that’s the validated practice.
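You can enforce the timing rule when results are logged. This sketch assumes you record when sanitation ended and when start-up is scheduled, and that your validated contact time is known. The function name and the 10-minute contact time in the usage lines are hypothetical.

```python
from datetime import datetime, timedelta

def after_sample_is_valid(sanitize_end, sample_time, startup_time, min_contact):
    """Accept an 'after' swab only if the sanitizer contact time has elapsed
    and the sample was taken before production start-up (the pre-op window)."""
    earliest = sanitize_end + min_contact
    return earliest <= sample_time < startup_time

# Hypothetical shift: sanitation ends 04:00, start-up at 06:00, 10-minute contact time.
end = datetime(2024, 5, 1, 4, 0)
startup = datetime(2024, 5, 1, 6, 0)
contact = timedelta(minutes=10)
print(after_sample_is_valid(end, datetime(2024, 5, 1, 5, 45), startup, contact))  # True
```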

Technique: how to swab so results mean the same thing every time

ATP is technique-sensitive. Standardize:

- Swab area: a fixed area (e.g., 10×10 cm, marked with a template or frame) whenever possible.

- Pattern: cross-hatch with consistent strokes.

- Pressure and angle: train and periodically re-qualify techs.

- Surface state: wet vs dry affects pickup; define what you do and stick to it.

- Labeling: point ID, zone, time, operator, cleaning cycle ID.
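A fixed record structure keeps labeling consistent enough to trend. The dataclass below is a hypothetical schema. The field names, the added surface and area fields, and the validation rules are assumptions; adapt them to your own LIMS or spreadsheet.

```python
from dataclasses import dataclass
from datetime import datetime

@dataclass(frozen=True)
class SwabRecord:
    point_id: str        # fixed control-point ID from the site map
    zone: int            # hygienic zone 1-4
    surface: str         # e.g. "stainless", "plastic", "gasket" (used to stratify limits)
    taken_at: datetime   # sample time
    operator: str        # who swabbed (needed to spot technique drift)
    cycle_id: str        # cleaning cycle the sample verifies
    area_cm2: float      # swabbed area; 100 for a 10x10 cm frame
    rlu: int             # meter reading

    def __post_init__(self):
        # Reject records that would silently break comparability.
        if not 1 <= self.zone <= 4:
            raise ValueError(f"zone must be 1-4, got {self.zone}")
        if self.area_cm2 <= 0:
            raise ValueError("swab area must be positive")

rec = SwabRecord("F-03", 1, "gasket", datetime(2024, 5, 1, 5, 45), "op7", "C-118", 100.0, 85)
```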

Limits: how to set pass/fail thresholds without guessing

Avoid copying another plant’s RLU limits. Instead:

- Baseline study: collect enough after-clean swabs over multiple days/shifts (and ideally multiple operators).

- Stratify by surface type & zone: stainless steel ≠ plastic ≠ rubber gasket.

- Pick a rule that matches risk: common approaches include percentile-based limits (e.g., 80th/90th percentile of “good” performance) plus a tighter “target” limit for continuous improvement.

- Verify with micro: ensure your ATP pass level is not masking microbial risk.
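As a sketch of the percentile approach, the function below derives a limit and a tighter target for each zone-and-surface stratum from baseline after-clean RLUs. The 90th-percentile limit and median target are placeholders, not recommendations. Choose percentiles that match your risk assessment and confirm them against micro results.

```python
from collections import defaultdict
from statistics import quantiles

def percentile_limits(baseline, limit_pct=90, target_pct=50):
    """Derive per-stratum limits from baseline after-clean RLUs.

    baseline: iterable of (zone, surface, rlu) tuples from the baseline study.
    Returns {(zone, surface): {"limit": ..., "target": ...}}.
    """
    groups = defaultdict(list)
    for zone, surface, rlu in baseline:
        groups[(zone, surface)].append(rlu)
    limits = {}
    for key, values in groups.items():
        cuts = quantiles(values, n=100, method="inclusive")  # 99 percentile cut points
        limits[key] = {"limit": cuts[limit_pct - 1], "target": cuts[target_pct - 1]}
    return limits
```

Each stratum gets its own limits, so a gasket's typically higher readings do not loosen the limit for flat stainless steel.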

In an 8-month monitoring study at a university canteen, researchers from Polytechnic University of Marche (Department of Agricultural, Food and Environmental Sciences) reported a very strong association between mean ATP readings and viable counts of total mesophilic aerobes (reported correlation r = 0.99), supporting ATP’s value for routine verification when used with defined limits. (pmc.ncbi.nlm.nih.gov)
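To check whether ATP and culture results move together in your own plant, you can compute a Pearson correlation on paired results from the same control points. This is a minimal standard-library sketch and does not reproduce the cited study's analysis. A weak correlation in your data would be a reason to lean more heavily on micro confirmation.

```python
from math import sqrt

def pearson_r(xs, ys):
    """Pearson correlation coefficient for paired readings."""
    if len(xs) != len(ys) or len(xs) < 3:
        raise ValueError("need at least 3 paired readings of equal length")
    mx, my = sum(xs) / len(xs), sum(ys) / len(ys)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sxx = sum((x - mx) ** 2 for x in xs)
    syy = sum((y - my) ** 2 for y in ys)
    if sxx == 0 or syy == 0:
        raise ValueError("correlation is undefined when one series is constant")
    return sxy / sqrt(sxx * syy)

# Hypothetical paired results: mean RLU and aerobic count at five control points.
rlu = [85, 140, 220, 310, 460]
apc = [12, 30, 45, 80, 120]
print(round(pearson_r(rlu, apc), 2))
```

On Python 3.10 and later, `statistics.correlation` computes the same coefficient.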

Proving “improvement,” not just “control”

To prove improvement, run a controlled comparison:

- Keep points and method identical

- Change one thing at a time (chemistry, time, temperature, mechanical action, tool)

- Compare distributions (median + spread), not just averages

- Document the new standard work when the shift is real

And yes—this mindset applies beyond sanitation. The same “verify, don’t guess” logic shows up in maintenance work like fuel injector cleaning, where before/after data (symptom change + measured outputs) is more trustworthy than subjective impressions.

Which microbiological tests best confirm cleaning improvement (and when should you use them)?

The best microbiological tests to confirm cleaning improvement are indicator organism counts and targeted environmental monitoring that align to your hazard and zone—because they measure what ATP can miss: viable organisms and harborage-driven persistence.

Moreover, the right test depends on whether you’re validating a cleaning step, monitoring routine control, or investigating a failure.

Indicator organisms vs pathogen tests: what to choose first

Start with indicator organisms for routine confirmation because they’re practical and trendable:

- Total aerobic counts (APC/TVC): broad indicator of general hygiene.

- Enterobacteriaceae / coliforms: stronger signals of fecal/environmental hygiene breakdown in many food contexts.

- Yeast & mold: relevant for certain products/environments.

Use pathogen-focused methods when risk and history justify it:

- Listeria spp. environmental monitoring in RTE environments (Zone 2–3 emphasis).

- Salmonella environmental monitoring in relevant operations.

- Product- and line-specific pathogen hazards as defined by your HACCP/FS plan.

Swab, sponge, contact plate: picking the right sampling approach

Match the sampling tool to the surface and the question:

- Swabs: great for small, irregular, creviced spots (threads, hinges).

- Sponges: better for larger areas and textured surfaces.

- Contact plates: good for flat surfaces but limited in niches and wet areas.

Your micro results are only as good as your recovery. That’s why programs often standardize area using sampling frames and train operators on pressure and coverage.

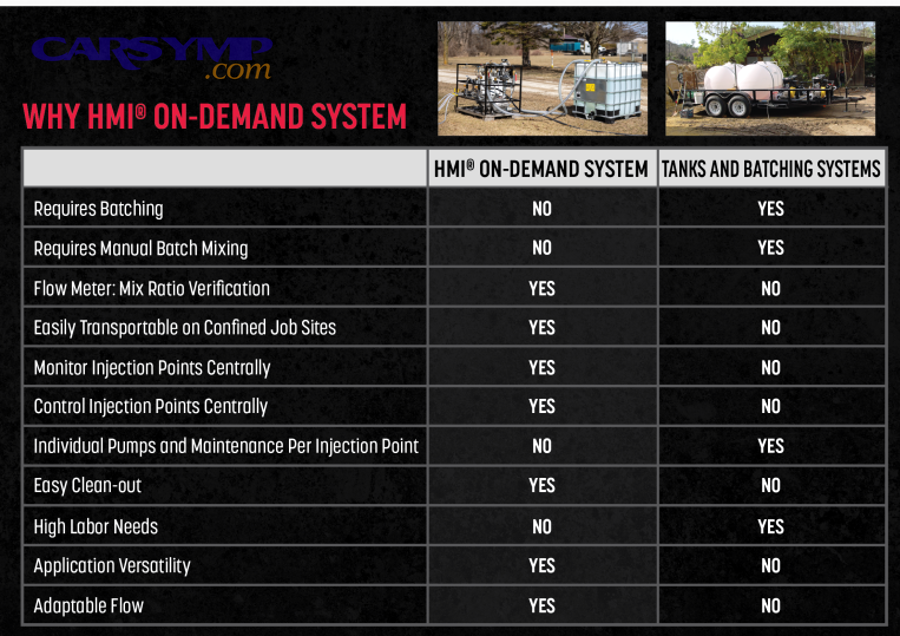

A practical method stack: ATP + micro + residue tests

Here’s a useful way to think about the tools: the table below summarizes what each method tells you, what it misses, and when it fits best.

| Method | What it detects well | What it can miss | Best use in an improvement program |
|---|---|---|---|
| ATP (RLU) | Organic residue, overall “cleanliness signal” | Low-level viable microbes; chemical interference | Fast verification, daily control, training feedback |
| APC/TVC swabs | Viable aerobic load trends | Specific pathogens; slow turnaround | Routine confirmation, trend proof of improvement |
| Indicator organisms (e.g., coliforms/EB) | Hygiene breakdown signals | Non-target organisms | Targeted confirmation, zone-based monitoring |
| Allergen/protein residue | Specific residues relevant to allergen control | Microbial risk | Changeover validation, allergen risk reduction |

Evidence that ATP and micro can track together (when done right)

A study by the University of Nebraska–Lincoln (Department of Nutrition and Health Sciences) reported a strong linear relationship between ATP bioluminescence readings and aerobic plate counts on plastic cutting boards under controlled conditions, supporting ATP as a practical sanitation monitoring tool when paired with good method control. (charm.com)

The key phrase is “when paired with good method control.” ATP is most reliable as a trend tool with standardized technique and periodic culture-based confirmation.
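Periodic culture-based confirmation is most useful when it flags discordant results: sites where ATP passed but the culture result did not. The sketch below assumes paired ATP and APC results taken from adjacent areas at the same control point and time. The limits and point IDs are hypothetical.

```python
def masked_sites(paired, atp_limit, apc_limit):
    """Return point IDs where ATP passed but the culture result failed.

    paired: iterable of (point_id, rlu, apc_cfu) tuples.
    """
    return sorted(
        point_id
        for point_id, rlu, apc in paired
        if rlu <= atp_limit and apc > apc_limit
    )

results = [("F-01", 120, 5), ("F-02", 90, 250), ("G-07", 400, 800), ("G-09", 80, 10)]
print(masked_sites(results, atp_limit=150, apc_limit=100))  # ['F-02']
```

G-07 is not listed because ATP already caught it. Only F-02 suggests the ATP limit may be masking microbial risk at that point.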

How do you prove improvement over time (not just after one good cleaning)?

You prove improvement over time by turning your ATP and microbiological results into a stable, trended control system—complete with baselines, control limits, and escalation rules—so you can show the cleaning process stays capable across shifts, weeks, and equipment states.

In particular, focus on sustained performance at the hardest-to-clean points, because that’s where the real risk lives.

Build a baseline and define what “normal” looks like

Before you claim improvement, establish:

- A baseline window (e.g., 2–4 weeks of routine data)

- A clear sampling schedule by zone and equipment family

- A consistent data structure (point IDs, line, shift, operator, cycle)

Then define:

- Target: “great performance” level (drives continuous improvement)

- Limit: pass/fail threshold (drives immediate action)

- Alert level: early warning (triggers checks before failure)
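You can apply the three levels mechanically, so every reading lands in the same band regardless of who reviews it. The function below is a minimal sketch. The band names and the requirement that target ≤ alert ≤ limit are illustrative assumptions.

```python
def classify(rlu, target, alert, limit):
    """Map one reading onto the target / alert / limit bands."""
    if not target <= alert <= limit:
        raise ValueError("expected target <= alert <= limit")
    if rlu > limit:
        return "fail"      # above the pass/fail threshold: immediate action
    if rlu > alert:
        return "alert"     # early warning: check before it becomes a failure
    if rlu <= target:
        return "target"    # great performance
    return "pass"

print([classify(r, 100, 200, 300) for r in (50, 150, 250, 350)])
# ['target', 'pass', 'alert', 'fail']
```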

Trend rules that prevent “gaming” and false confidence

Use simple, auditable rules such as:

- 2 consecutive alerts at the same point → focused re-clean + supervisor review

- 3 failures in 10 samples for a zone → deep dive (chemistry, time, mechanical action)

- New spike after change (new chemical, new tool, new operator) → re-qualification
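The first two trend rules are simple enough to automate. This sketch assumes results arrive in time order as (point ID, zone, status) tuples. Treating “3 failures in 10 samples” as a sliding window, and resetting the window after an escalation, are design assumptions. The third rule (re-qualification after a change) depends on change records and is not implemented here.

```python
from collections import defaultdict, deque

def check_trend_rules(results):
    """Apply the consecutive-alert and 3-in-10 failure rules to time-ordered results.

    results: iterable of (point_id, zone, status); status is one of
    "target", "pass", "alert", or "fail".
    Returns a list of (action, subject) escalations.
    """
    escalations = []
    last_status = {}                                # previous status per point
    recent = defaultdict(lambda: deque(maxlen=10))  # last 10 statuses per zone
    for point_id, zone, status in results:
        if status == "alert" and last_status.get(point_id) == "alert":
            escalations.append(("re-clean + supervisor review", point_id))
        last_status[point_id] = status
        recent[zone].append(status)
        if recent[zone].count("fail") >= 3:
            escalations.append(("deep dive", zone))
            recent[zone].clear()                    # start a fresh window after escalating
    return escalations
```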

You can also segment by:

- Equipment state: new gaskets vs aged gaskets

- Changeovers: allergen vs non-allergen runs

- Seasonality: humidity/temperature effects for yeasts/molds

Show causality: tie improvements to specific changes

To prove you caused improvement, link data to a defined intervention:

- Increased mechanical action (brush type, foaming method)

- Improved disassembly access

- Optimized detergent concentration and contact time

- Better rinse step to reduce chemistry interference

- Hygienic design fixes (replace cracked gasket, eliminate dead leg)

Then confirm: the “after” distribution stays improved for multiple cycles, not just one day.
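One way to encode that check is to require each of the last few cycles to beat the baseline median. The cycle count of 5 is an arbitrary placeholder, and the function name is hypothetical.

```python
from statistics import median

def sustained_improvement(cycles, baseline_median, min_cycles=5):
    """True if each of the most recent `min_cycles` cleaning cycles has a
    post-clean median below the baseline median.

    cycles: list of per-cycle RLU lists, oldest first.
    """
    if len(cycles) < min_cycles:
        return False        # not enough history to call it sustained
    return all(median(c) < baseline_median for c in cycles[-min_cycles:])
```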

Guard against chemical interference

ATP readings can be altered by cleaner-disinfectant chemistry; different chemistries can quench readings variably across meters. (journals.plos.org)

Operationally, this is why many QA plans specify a consistent post-clean state (e.g., after rinse and drain time) before swabbing.

What corrective actions actually drive measurable cleaning improvement?

Corrective actions that drive measurable cleaning improvement are the ones that change the inputs of cleaning performance—mechanical action, chemistry, time, temperature, and access—then verify the effect at the same control points until the trend shifts and stabilizes.

In addition, the best corrective actions remove the reasons contamination persists: harborage, recontamination, and inconsistent execution.

Fix the system, not the symptom: a corrective action playbook

- Standardize execution
  - Visual work instructions with disassembly photos
  - Tool control (right brush/pad for the right surface)
  - Operator qualification and periodic re-checks

- Increase access to the hard spots
  - Remove guards, open hinges, add quick-release clamps
  - Replace worn gaskets that trap soil
  - Redesign or cap hollow rollers/tubing ends

- Optimize chemistry
  - Confirm concentration (titration or test strips where applicable)
  - Ensure correct contact time
  - Confirm water hardness impacts and adjust

- Improve mechanical action
  - Foam coverage + manual scrub at niches
  - CIP flow/turbulence checks
  - Replace ineffective tools (worn brushes, degraded pads)

- Prevent recontamination
  - Separate clean/dirty tools and traffic flows
  - Drying and storage controls
  - Post-clean handling rules (gloves, touch points)

Don’t forget the cost lens (without letting it distort verification)

Teams often ask what the “right” investment is. You can frame costs as:

- Low cost: better training + better access + better tools

- Medium cost: chemistry optimization + data system + more frequent verification

- High cost: hygienic design redesign or equipment replacement

That logic also shows up in maintenance examples people recognize. For instance, an injector cleaning cost estimate can look “cheap” compared to replacing injectors, but the disciplined approach is still to verify outcomes with consistent metrics. DIY injector cleaning risks can include inconsistent results, collateral damage, or symptoms returning if the root cause (fuel quality, deposits, filtration) isn’t addressed. Likewise, in plants, re-cleaning is “cheap” until it becomes chronic; at that point, hygienic design fixes often deliver the real, measurable shift.

To keep that improvement from fading, apply the sanitation equivalent of fuel quality and injector health tips: control inputs (chemistry quality, water hardness, tool condition), avoid shortcuts, and verify regularly so small drifts don’t become big failures.

What can make you think cleaning improved when it didn’t?

Several factors can make cleaning look improved when it isn’t: biased sampling, inconsistent technique, chemistry interference, and “moving the goalposts” by changing thresholds or timing—so the numbers improve while the real risk stays the same.

Next, treat these as failure modes and build controls against them.

The 7 most common false “improvement” traps

- Sampling bias
  - Swabbing easier spots, not true control points

- Timing drift
  - Swabbing earlier/later than the baseline method

- Technique drift
  - Smaller area, lighter pressure, fewer strokes

- Threshold drift
  - Quietly raising the pass limit to reduce failures

- Chemistry interference
  - Residual sanitizer affecting ATP signal (often meter- and chemistry-dependent) (journals.plos.org)

- Equipment condition changes
  - New gasket temporarily improves results; worn parts slowly bring failures back

- Data blindness
  - Looking only at averages, not variation and spikes

A simple “proof checklist” QA can use

Before claiming “cleaning improved,” confirm you can answer “yes” to all:

- Same points, same method, same timing

- Defined limits documented and unchanged

- Distribution improved (level + variation), not just one-off passes

- Micro confirmation supports the direction of change

- Improvement holds across shifts/operators
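If you keep the checklist alongside the data, a small structure can make open items visible before anyone signs off. The class and field names below are hypothetical and simply mirror the five questions above.

```python
from dataclasses import dataclass, fields

@dataclass
class ImprovementClaim:
    same_points_method_timing: bool
    limits_documented_unchanged: bool
    distribution_improved: bool        # level + variation, not one-off passes
    micro_supports_direction: bool
    holds_across_shifts_operators: bool

    def open_items(self):
        """Names of checklist items not yet answered 'yes'."""
        return [f.name for f in fields(self) if not getattr(self, f.name)]

    def can_claim(self):
        """A claim is ready only when every item is 'yes'."""
        return not self.open_items()
```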

Evidence (why verification programs combine tools)

In routine monitoring of food contact surfaces, researchers from Polytechnic University of Marche reported that ATP monitoring was strongly associated with viable counts in their setting while also emphasizing ATP does not replace traditional microbiological analyses—supporting the “combine tools” approach used in robust QA programs. (pmc.ncbi.nlm.nih.gov)